Navigating the World of Generative AI: A Guide to Essential Terminology

Posted by Gary A. Stafford in AI/ML, Machine Learning, Technology Consulting on April 9, 2023

Learn the essential terms and concepts you need to know to navigate the rapidly evolving world of generative AI

Generative AI is a fascinating and rapidly evolving field that has the potential to transform the way we interact with technology. However, with so much buzz and hype surrounding the topic, making sense of it all can be challenging. In this article, we’ll cut through the noise so you can gain a clear understanding of the essential terminology you need to navigate the world of generative AI.

According to a variety of sources, including McKinsey & Company and Vox Media, the critical difference between generative AI and other emerging technologies is that millions of people can use it, and already are using it, to create new content, such as text, photos, video, code, and 3D renderings, based on the data it was trained on. Recent breakthroughs in the field have the potential to drastically change the way we approach content creation. This has led to widespread excitement and some understandable apprehension about the potential for generative AI to impact virtually every aspect of society and disrupt industries, including media and entertainment, healthcare and life sciences, education, advertising, legal services, and finance.

Even if your current role is not in technology, it is highly likely that generative AI will have a direct impact on both your personal and professional life. Familiarizing yourself with basic terminology related to generative AI can help you better comprehend the discussions on social media and in the news.

Terminology

Let’s explore the following terminology (in alphabetical order):

- Artificial General Intelligence (AGI)

- Artificial Intelligence (AI)

- ChatGPT

- DALL·E

- Deep Learning (DL)

- Generative AI

- Generative Pre-trained Transformer (GPT)

- Intelligence Amplification (IA)

- Large Language Model (LLM)

- Machine Learning (ML)

- Neural Network

- OpenAI

- Prompt Engineering

- Reinforcement Learning with Human Feedback (RLHF)

Below is a knowledge graph, created with OpenAI ChatGPT, showing the approximate relationships between the post’s terms.

Artificial General Intelligence (AGI)

According to the all-new Bing Chat, powered by OpenAI’s GPT-4, artificial general intelligence (AGI) is the ability of an intelligent agent to understand or learn any intellectual task that human beings or other animals can. It is a primary goal of some artificial intelligence research and a common topic in science fiction and futurism. According to Forbes, which also prompted ChatGPT, AGI refers to a theoretical type of artificial intelligence that possesses human-like cognitive abilities, such as the ability to learn, reason, solve problems, and communicate in natural language.

Eliezer Yudkowsky is an American researcher, writer, and philosopher on the topic of AI. In the podcast episode Eliezer Yudkowsky: Dangers of AI and the End of Human Civilization, MIT research scientist Lex Fridman explores various aspects of artificial general intelligence against the backdrop of OpenAI’s recent release of GPT-4.

Artificial Intelligence (AI)

According to the Brookings Institution, AI is generally thought to refer to machines that respond to stimulation consistent with traditional responses from humans, given the human capacity for contemplation, judgment, and intention. Similarly, according to the U.S. Department of State, the term artificial intelligence refers to a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing real or virtual environments.

ChatGPT

According to ChatGPT itself, ChatGPT is a large language model developed by OpenAI. It was trained on a massive dataset of human-written text using a deep neural network (DNN) architecture called GPT (Generative Pre-trained Transformer). Its purpose is to generate human-like responses to questions and prompts, engage in conversations, and perform various language-related tasks. It is a virtual assistant capable of understanding and generating natural language.

DALL·E

According to Wikipedia, DALL·E is a deep learning model developed by OpenAI to generate digital images from natural language descriptions, called prompts. The name DALL·E is a portmanteau of WALL-E, the animated Pixar robot character, and Salvador Dalí, the Spanish surrealist artist. According to OpenAI, DALL·E is an AI system that can create realistic images and art from a description in natural language. OpenAI introduced DALL·E in January 2021. Just over a year later, in April 2022, it announced DALL·E 2, which generates more realistic and accurate images at 4x greater resolution. DALL·E 2 can create original, realistic images and art from a text description, combining concepts, attributes, and styles.

Deep Learning (DL)

According to IBM, deep learning is a subset of machine learning (ML) that is essentially a neural network with three or more layers. These neural networks attempt to simulate the behavior of the human brain (albeit far from matching its ability), allowing them to “learn” from large amounts of data. While a neural network with a single layer can still make approximate predictions, additional hidden layers can help to optimize and refine for accuracy.
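To make “three or more layers” concrete, here is a minimal sketch in plain Python of a forward pass through a small fully connected network with three hidden layers. The layer sizes, the ReLU activation, and the helper names (`dense`, `init_layer`, `forward`) are illustrative assumptions; the weights are random and untrained, so the output only demonstrates how data flows from layer to layer.

```python
import random


def relu(z):
    return max(0.0, z)


def identity(z):
    return z


def dense(inputs, weights, biases, activation):
    """One fully connected layer: weights[j][i] links input i to neuron j."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def init_layer(n_in, n_out, rng):
    """Random (untrained) weights and zero biases for one layer."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def forward(x, layers):
    """Pass x through every hidden layer (ReLU), then a linear output layer."""
    *hidden, (w_out, b_out) = layers
    for weights, biases in hidden:
        x = dense(x, weights, biases, relu)
    return dense(x, w_out, b_out, identity)


rng = random.Random(0)
sizes = [4, 8, 8, 8, 1]  # 4 inputs -> three hidden layers of 8 neurons -> 1 output
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
print(forward([0.5, -1.0, 2.0, 0.1], layers))
```

Training would adjust these weights from data; that step is left out to keep the focus on the layered structure.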

Generative AI

According to Wikipedia, generative artificial intelligence (AI), aka generative AI, is a type of AI system capable of generating text, images, or other media in response to prompts. Generative AI systems use generative models such as large language models (LLMs) to statistically sample new data based on the training data set used to create them.
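The phrase “statistically sample new data” can be illustrated with the final step of text generation: turning a model’s scores (logits) for candidate next tokens into probabilities, then drawing one at random. This is a minimal sketch; the three-word vocabulary and the logit values are made up, and `temperature` is a common sampling setting where lower values make the choice more deterministic.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


vocab = ["cat", "dog", "fish"]
logits = [2.0, 1.0, 0.1]  # hypothetical model scores for the next token
rng = random.Random(42)
print([sample_next(vocab, logits, temperature=0.7, rng=rng) for _ in range(5)])
```

Repeating this step, feeding each sampled token back in as context, is how a language model produces new text that resembles, but does not copy, its training data.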

Generative Pre-trained Transformer (GPT)

According to ChatGPT, Generative Pre-trained Transformer (GPT) is a deep learning architecture used for natural language processing (NLP) tasks, such as text generation, summarization, and question-answering. It uses a transformer neural network architecture with a self-attention mechanism, allowing the model to understand each word’s context in a sentence or text. The success of GPT models lies in their ability to generate natural-sounding and coherent text similar to human-written language. The term “pre-trained” refers to the fact that the model is trained on large amounts of unlabeled text data, such as books or web pages, to learn general language patterns and features, before being fine-tuned on smaller labeled datasets for specific tasks.
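The self-attention mechanism mentioned above can be sketched in a few lines. Below is single-head, scaled dot-product attention in plain Python with the causal mask used by GPT-style decoders, so each token attends only to itself and earlier tokens. Real models add learned multi-head projections, positional information, and many stacked layers; the tiny identity matrices here are illustrative assumptions.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def project(x, w):
    """Multiply token vectors x (n x d_in) by a weight matrix w (d_in x d_out)."""
    return [[sum(xi * w[i][j] for i, xi in enumerate(row)) for j in range(len(w[0]))] for row in x]


def causal_self_attention(x, w_q, w_k, w_v):
    q, k, v = project(x, w_q), project(x, w_k), project(x, w_v)
    scale = math.sqrt(len(k[0]))
    outputs = []
    for i, q_i in enumerate(q):
        # Token i may only attend to positions 0..i (the causal mask).
        scores = [sum(a * b for a, b in zip(q_i, k[j])) / scale for j in range(i + 1)]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors it attends to.
        outputs.append([sum(w * v[j][c] for j, w in enumerate(weights)) for c in range(len(v[0]))])
    return outputs


# Two toy 2-dimensional token embeddings and identity projections
tokens = [[1.0, 0.0], [0.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
print(causal_self_attention(tokens, identity, identity, identity))
```

The attention weights are what let the model weigh each earlier word when interpreting the current one, which is the “context” the paragraph above refers to.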

According to ZDNET, GPT-4, announced on March 14, 2023, is the newest version of OpenAI’s language model systems. Its predecessor, GPT-3.5, powered the company’s wildly popular ChatGPT chatbot when it launched in November 2022. According to OpenAI, GPT-4 is the latest milestone in OpenAI’s effort to scale up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while less capable than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Intelligence Amplification (IA)

According to Wikipedia, intelligence amplification (IA) (aka cognitive augmentation, machine-augmented intelligence, or enhanced intelligence) refers to the effective use of information technology in augmenting human intelligence. Similarly, Harvard Business Review describes intelligence amplification as the use of technology to augment human intelligence and argues that a paradigm shift is on the horizon, in which new devices will offer less intrusive, more intuitive ways to amplify our intelligence.

In his latest book, Impromptu: Amplifying Our Humanity Through AI, co-authored with GPT-4, Greylock general partner Reid Hoffman discusses intelligence amplification and AI’s potential to amplify human abilities. Hoffman also explored the topic in his interview with OpenAI CEO Sam Altman on Greylock’s podcast series AI Field Notes.

Large Language Model (LLM)

According to Wikipedia, a large language model (LLM) is a language model consisting of a neural network with many parameters (typically billions of weights or more), trained on large quantities of unlabeled text using self-supervised learning. Though the term has no formal definition, it often refers to deep learning models with a parameter count on the order of billions or more.
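Self-supervised learning is what lets LLMs train on unlabeled text: the labels come from the text itself, since the “answer” for each position is simply the next token. The minimal sketch below turns a sentence into (context, next-token) training pairs. It assumes a naive whitespace tokenizer and a three-token context window for readability; production LLMs use subword tokenizers and far longer contexts.

```python
def next_token_pairs(text, context_size=3):
    """Build (context, target) pairs from raw text; no human labeling is needed."""
    tokens = text.split()  # naive whitespace tokenizer (an assumption for illustration)
    pairs = []
    for i in range(1, len(tokens)):
        context = tokens[max(0, i - context_size):i]
        pairs.append((context, tokens[i]))
    return pairs


for context, target in next_token_pairs("the cat sat on the mat"):
    print(context, "->", target)
```

During pre-training, the model is asked to predict each `target` from its `context`, and its parameters are adjusted to make the correct token more likely.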

Machine Learning (ML)

According to MIT, machine learning (ML) is a subfield of artificial intelligence, which is broadly defined as the capability of a machine to imitate intelligent human behavior. Artificial intelligence systems are used to perform complex tasks in a way that is similar to how humans solve problems. The function of a machine learning system can be descriptive, meaning that the system uses the data to explain what happened; predictive, meaning the system uses the data to predict what will happen; or prescriptive, meaning the system will use the data to make suggestions about what action to take.
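As a minimal illustration of a predictive system learning from data, the sketch below fits a straight line to a few points with ordinary least squares and uses it to forecast a new value. The data points are made up, and linear regression is just one of the simplest ML techniques, chosen here for clarity rather than realism.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


# Hypothetical historical data: x = ad spend, y = sales
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
slope, intercept = fit_line(xs, ys)
print(slope, intercept)         # 2.0 1.0
print(slope * 5.0 + intercept)  # predicted y for x = 5: 11.0
```

The “learning” is the estimation of `slope` and `intercept` from past data; the prediction step applies them to an input the model has not seen.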

Neural Network

According to MathWorks, a neural network (aka artificial neural network or ANN) is an adaptive system that learns by using interconnected nodes or neurons in a layered structure that resembles a human brain. A neural network can be trained on data to recognize patterns, classify data, and forecast future events. Similarly, according to AWS, a neural network is a method in artificial intelligence that teaches computers to process data in a way that is inspired by the human brain. It is a type of machine learning process, called deep learning, that uses interconnected nodes or neurons in a layered structure that resembles the human brain. It creates an adaptive system that computers use to learn from their mistakes and improve continuously.
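The idea of a network that “learns from its mistakes” is easiest to see in a single artificial neuron. The sketch below trains a perceptron, the simplest neural network building block, on the logical AND function: its weights change only when a prediction is wrong. Deep networks are trained with gradient-based methods (backpropagation) instead, so treat this as a simplified illustration rather than how modern models are trained.

```python
def predict(w, b, x):
    """Fire (1) if the weighted sum of inputs plus bias is positive, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(w, b, x)  # nonzero only when the prediction is wrong
            if error:
                mistakes += 1
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                b += lr * error
        if mistakes == 0:  # every sample classified correctly: stop early
            break
    return w, b


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # [0, 0, 0, 1]
```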

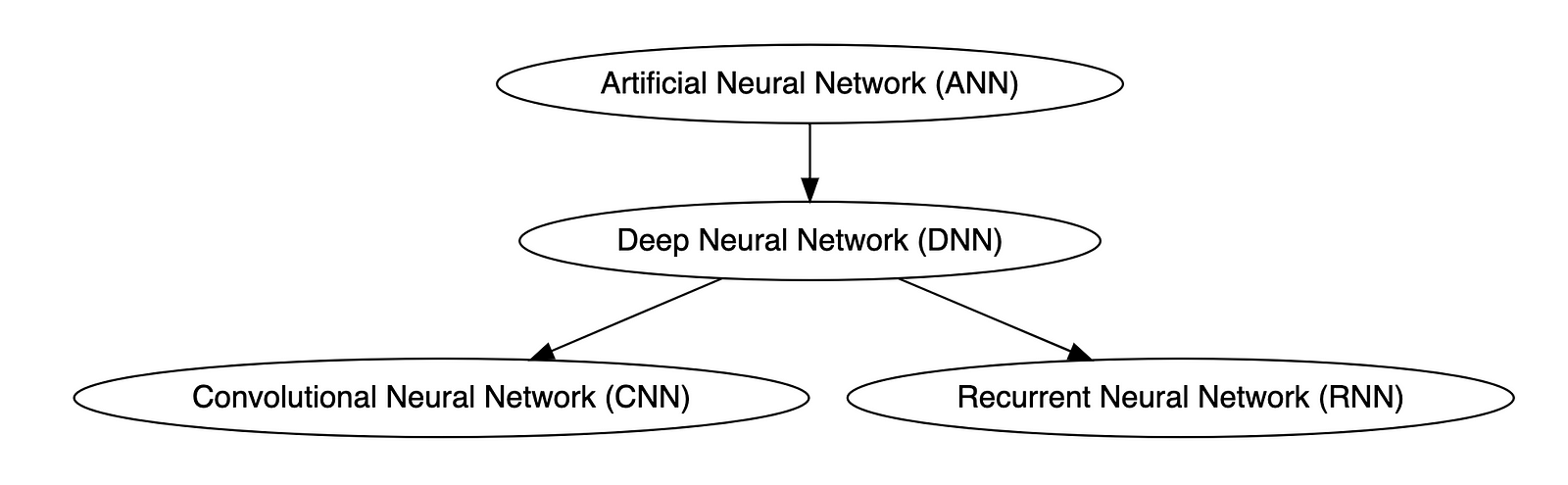

Types of Neural Networks

Deep neural networks (DNNs) are extensions of conventional artificial neural networks (ANNs) with more layers. While conventional ANNs have one or two hidden layers to process data, DNNs contain many layers between the input and output layers. Convolutional neural networks (CNNs) are one kind of DNN. A CNN has convolution layers, which use filters to convolve an area of input data into a smaller area, detecting important or specific features within that area. Recurrent neural networks (RNNs) can also be considered a type of DNN: they can have multiple layers and are designed to process sequential data.
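The CNN description above, filters that convolve an area of input into a smaller area, can be sketched as a 2D convolution over a tiny grayscale image. This minimal version uses no padding and a stride of 1, and the 2x2 vertical-edge filter is an illustrative assumption; in a trained CNN, the filter values are learned. Like most deep learning libraries, it technically computes cross-correlation (the filter is not flipped).

```python
def conv2d(image, kernel):
    """Slide kernel over image (no padding, stride 1); output is smaller than the input."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj] for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# 4x4 image: dark on the left, bright on the right
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1], [-1, 1]]  # responds where brightness increases left to right
print(conv2d(image, vertical_edge))  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The 4x4 input shrinks to a 3x3 feature map whose large values mark where the edge is, which is the “detecting specific parts within the area” behavior described above.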

OpenAI

OpenAI is a San Francisco-based AI research and deployment company whose mission is to “ensure that artificial general intelligence benefits all of humanity.” According to Wikipedia, OpenAI was founded in 2015 by current CEO Sam Altman, Greylock general partner Reid Hoffman, Y Combinator founding partner Jessica Livingston, Elon Musk, Ilya Sutskever, Peter Thiel, and others. OpenAI’s current products include GPT-4, DALL·E 2, Whisper, ChatGPT, and OpenAI Codex.

Prompt Engineering

According to Cohere, prompting (aka prompt engineering) is at the heart of working with LLMs. The prompt provides context for the text we want the model to generate. The prompts we create can be anything from simple instructions to more complex pieces of text, and they are used to encourage the model to produce a specific type of output. Cohere’s Generative AI with Cohere blog post series is an excellent resource on the topic of prompting. Similarly, according to Dataconomy, using prompts to get the desired result from an AI tool is known as AI prompt engineering. A prompt can be a statement or a block of code, but it can also just be a string of words. Just as you might give a person a prompt as a starting point for writing an essay, you can use prompts to guide an AI model to produce the desired results for a specific task.
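Prompts are often assembled programmatically. The sketch below builds a few-shot prompt: an instruction, a handful of labeled examples, and the new input, ending exactly where the model should continue. The sentiment task, the `Text:`/`Sentiment:` layout, and the function name are hypothetical choices, not a format required by Cohere, OpenAI, or any other provider; the resulting string would be sent to whichever LLM API you use.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, labeled examples, and a new query into one prompt string."""
    lines = [instruction.strip(), ""]
    for text, label in examples:
        lines += [f"Text: {text}", f"Sentiment: {label}", ""]
    # End with an open slot so the model's natural continuation is the label.
    lines += [f"Text: {query}", "Sentiment:"]
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each text as Positive or Negative.",
    [
        ("The service was fast and friendly.", "Positive"),
        ("My order arrived broken.", "Negative"),
    ],
    "I loved the new update!",
)
print(prompt)
```

The examples show the model the expected output format, which is often more reliable than describing the format in the instruction alone.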

Reinforcement Learning with Human Feedback (RLHF)

According to Scale AI in their blog post, Why is ChatGPT so good?, large language models (LLMs) can now do more than simply predict the next word(s): they can follow human instructions and provide useful responses. These advancements are made possible by fine-tuning them with specialized instruction datasets and a technique called reinforcement learning with human feedback (RLHF). Similarly, according to Hugging Face, RLHF (aka RL from human preferences) uses methods from reinforcement learning to directly optimize LLMs with human feedback. RLHF has made it possible to begin aligning a model trained on a general corpus of text data with complex human values.
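One ingredient of RLHF can be shown compactly: the reward model. Human labelers compare two responses to the same prompt, and a reward model is trained so the preferred response scores higher, commonly using the pairwise loss -log(sigmoid(r_chosen - r_rejected)). The sketch below computes that loss for hypothetical reward scores. It omits the reward model itself and the subsequent reinforcement learning step (for example, PPO) that fine-tunes the LLM against the learned reward.

```python
import math


def pairwise_preference_loss(r_chosen, r_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    diff = r_chosen - r_rejected
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


def mean_preference_loss(pairs):
    """Average loss over (reward_chosen, reward_rejected) pairs from labeled comparisons."""
    return sum(pairwise_preference_loss(c, r) for c, r in pairs) / len(pairs)


# Hypothetical reward-model scores for three human-labeled comparisons
comparisons = [(2.1, 0.3), (0.5, 1.2), (1.0, 1.0)]
print(round(mean_preference_loss(comparisons), 4))
```

The loss is small when the human-preferred response already scores higher and large when the ranking is reversed, so minimizing it pushes the reward model toward human judgments.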

Ready for More?

Mastered all the terminology, ready for more? Here are some additional generative AI terms for you to learn:

- Alignment (AI Alignment, Aligned AI)

- Attention (Self-attention)

- Backpropagation

- Embeddings

- Foundation Model

- Next-Token Predictors

- Parameter

- Reinforcement Learning (RL)

- Self-Supervised Learning (SSL)

- Temperature

- Tokenization

- Transformer

- Vector

- Weight

🔔 To keep up with future content, follow Gary Stafford on LinkedIn.

This blog represents my viewpoints and not those of my employer, Amazon Web Services (AWS). All product names, logos, and brands are the property of their respective owners.

Ten Ways to Leverage Generative AI for Development on AWS

Posted by Gary A. Stafford in AI/ML, AWS, Bash Scripting, Big Data, Build Automation, Client-Side Development, Cloud, DevOps, Enterprise Software Development, Kubernetes, Python, Serverless, Software Development, SQL on April 3, 2023

Explore ten ways you can use Generative AI coding tools to accelerate development and increase your productivity on AWS

Generative AI coding tools are a new class of software development tools that leverage machine learning algorithms to assist developers in writing code. These tools use AI models trained on vast amounts of code to offer suggestions for completing code snippets, writing functions, and even entire blocks of code.

Quote generated by OpenAI ChatGPT

Introduction

Combining the latest Generative AI coding tools with a feature-rich and extensible IDE and your coding skills will accelerate development and increase your productivity. In this post, we will look at ten examples of how you can use Generative AI coding tools on AWS:

- Application Development: Code, unit tests, and documentation

- Infrastructure as Code (IaC): AWS CloudFormation, AWS CDK, Terraform, and Ansible

- AWS Lambda: Serverless, event-driven functions

- IAM Policies: AWS IAM policies and Amazon S3 bucket policies

- Structured Query Language (SQL): Amazon RDS, Amazon Redshift, Amazon Athena, and Amazon EMR

- Big Data: Apache Spark and Flink on Amazon EMR, AWS Glue, and Kinesis Data Analytics

- Configuration and Properties files: Amazon MSK, Amazon EMR, and Amazon OpenSearch

- Apache Airflow DAGs: Amazon MWAA

- Containerization: Kubernetes resources, Helm Charts, Dockerfiles for Amazon EKS

- Utility Scripts: PowerShell, Bash, Shell, and Python

Choosing a Generative AI Coding Tool

In my recent post, Accelerating Development with Generative AI-Powered Coding Tools, I reviewed six popular tools: ChatGPT, Copilot, CodeWhisperer, Tabnine, Bing, and ChatSonic.

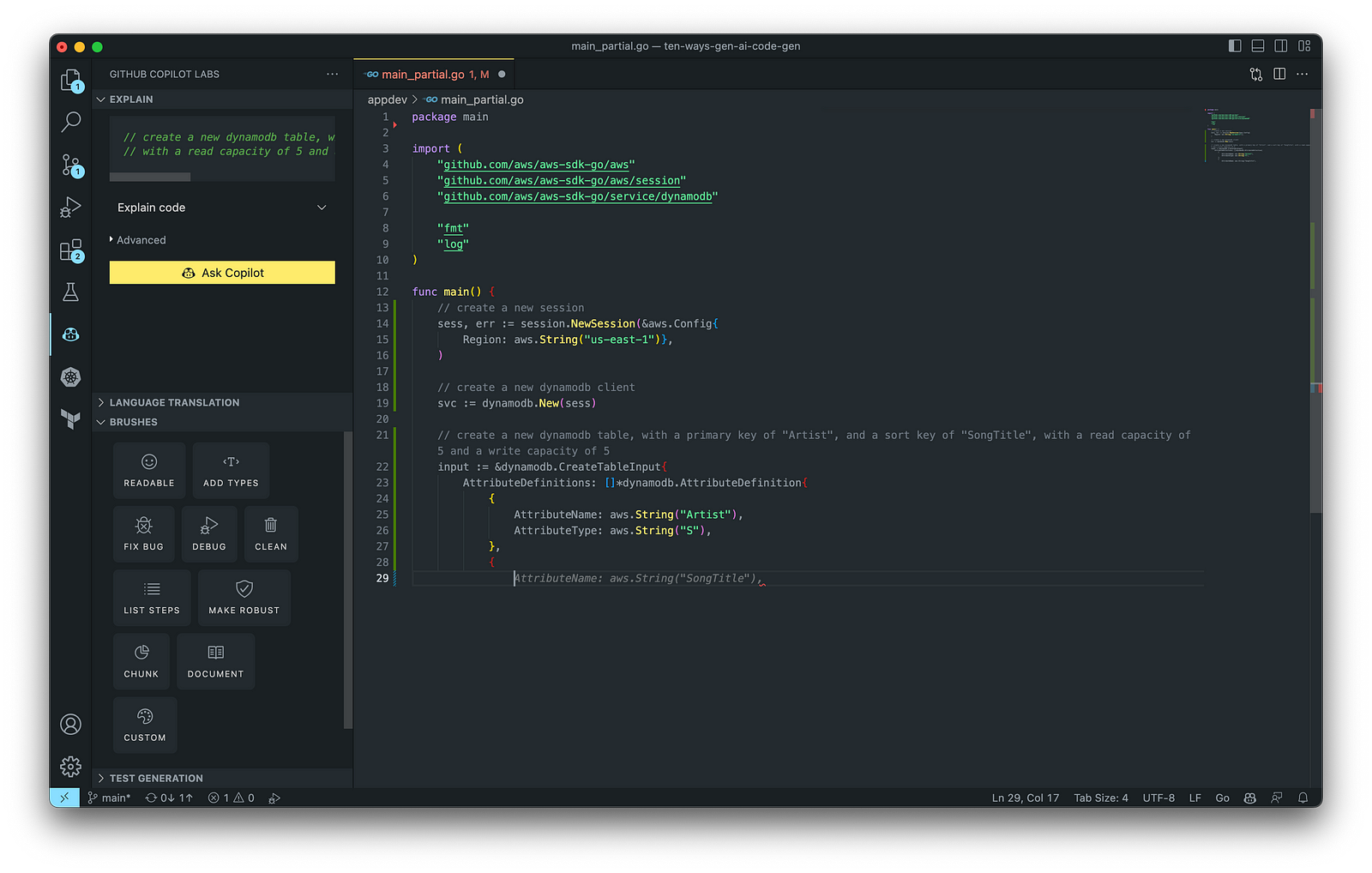

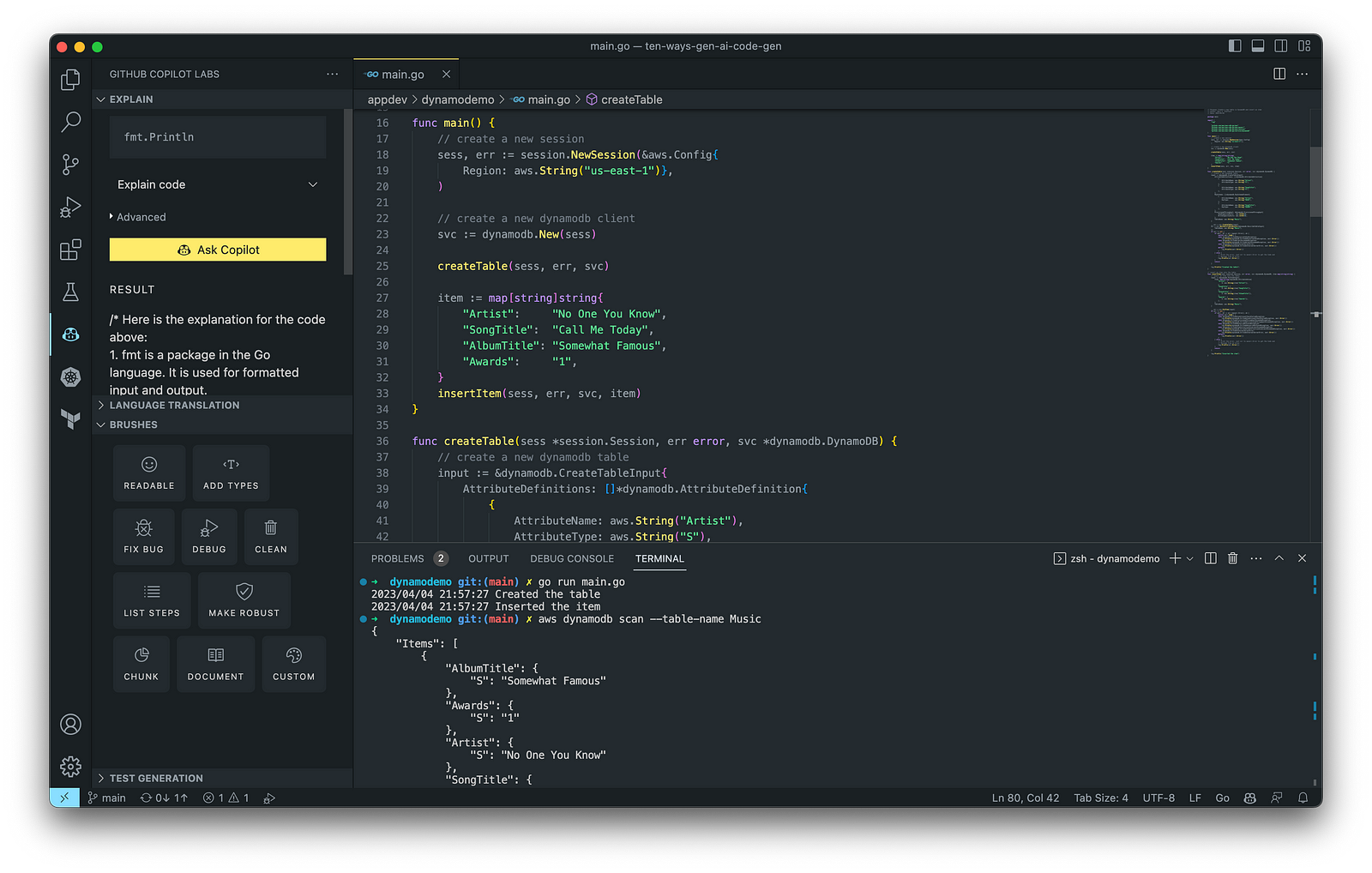

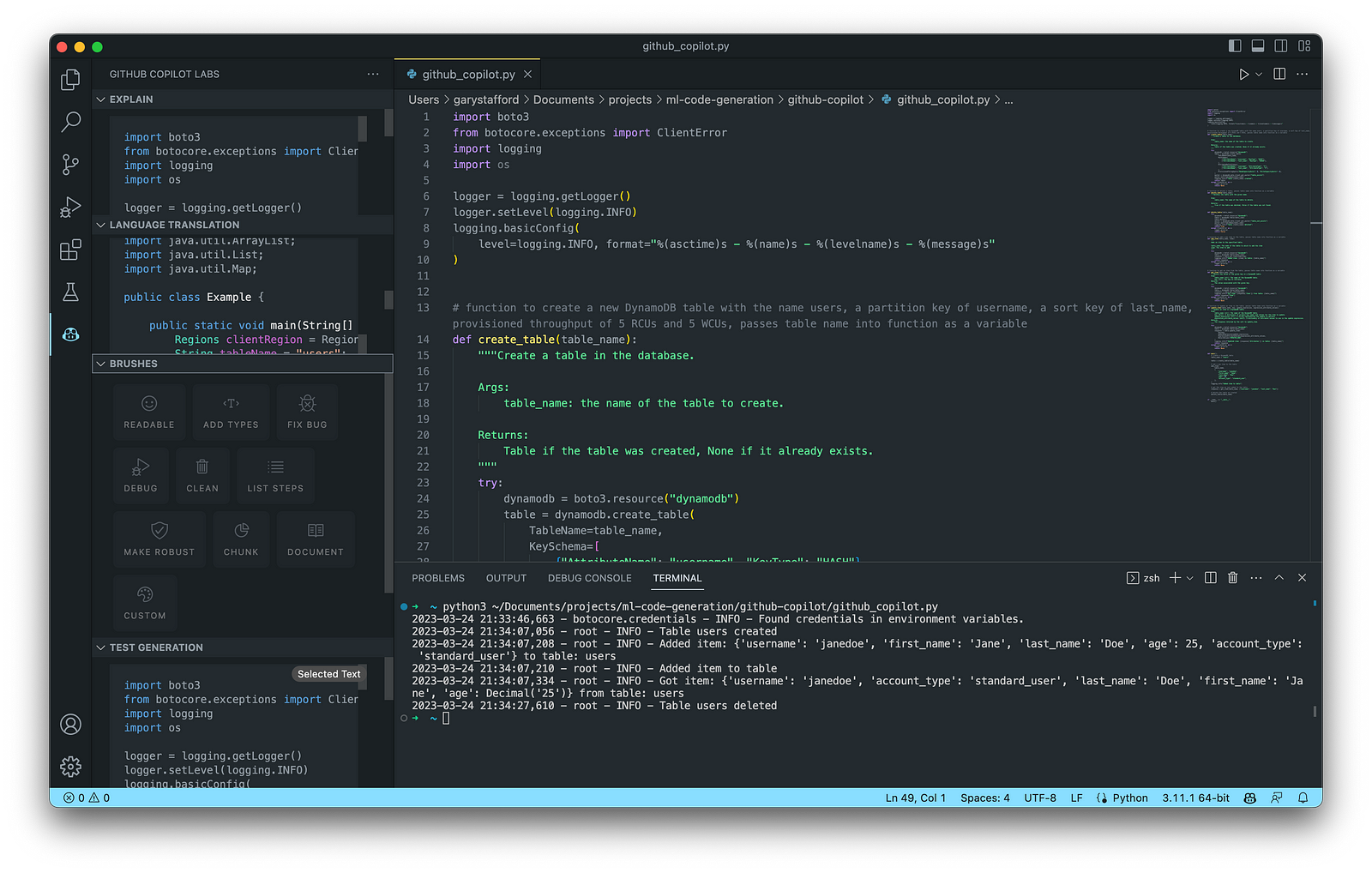

For this post, we will use GitHub Copilot, powered by OpenAI Codex, a new AI system created by OpenAI. Copilot suggests code and entire functions in real time, right from your IDE. Copilot is trained on all languages that appear in GitHub’s public repositories, although GitHub points out that the quality of the suggestions you receive may depend on the volume and diversity of training data for that language. Similar tools in this category support fewer languages than Copilot.

Copilot is currently available as an extension for Visual Studio Code, Visual Studio, Neovim, and the JetBrains suite of IDEs. The GitHub Copilot extension for Visual Studio Code (VS Code) already has 4.8 million downloads, and the GitHub Copilot Nightly extension, used for this post, has almost 280,000 downloads. I am also using the GitHub Copilot Labs extension in this post.

Ten Ways to Leverage Generative AI

Take a look at ten examples of how you can use Generative AI coding tools to increase your development productivity on AWS. All the code samples in this post can be found on GitHub.

1. Application Development

According to GitHub, trained on billions of lines of code, GitHub Copilot turns natural language prompts into coding suggestions across dozens of languages. These features make Copilot ideal for developing applications, writing unit tests, and authoring documentation. You can use GitHub Copilot to assist with writing software applications in nearly any popular language, including Go.

The final application, which uses the AWS SDK for Go to create an Amazon DynamoDB table, shown below, was formatted using the Go extension by Google and optimized using the ‘Readable,’ ‘Make Robust,’ and ‘Fix Bug’ GitHub Code Brushes.

Generating Unit Tests

For JavaScript and TypeScript, you can use TestPilot to generate unit tests based on your existing code and documentation. TestPilot, part of GitHub Copilot Labs, uses GitHub Copilot’s AI technology.

2. Infrastructure as Code (IaC)

Widely used Infrastructure as Code (IaC) tools include Pulumi, AWS CloudFormation, Azure ARM Templates, Google Deployment Manager, AWS Cloud Development Kit (AWS CDK), Microsoft Bicep, and Ansible. Many IaC tools use JSON- or YAML-based domain-specific languages (DSLs); AWS CDK and Pulumi are notable exceptions, letting you define infrastructure in general-purpose programming languages.

AWS CloudFormation

AWS CloudFormation is an Infrastructure as Code (IaC) service that allows you to easily model, provision, and manage AWS and third-party resources. The CloudFormation template is a JSON or YAML formatted text file. You can use GitHub Copilot to assist with writing IaC, including AWS CloudFormation in either JSON or YAML.

You can use the YAML Language Support by Red Hat extension to write YAML in VS Code.

VS Code has native JSON support with JSON Schema Store, which includes AWS CloudFormation. VS Code uses the CloudFormation schema to provide IntelliSense and flag schema errors in templates.
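To show the anatomy of a JSON template, here is a minimal sketch that builds one in Python and prints it. The single versioned S3 bucket, the `ArtifactBucket` logical ID, and the `BucketName` output are illustrative assumptions, not the template used in this post; the printed JSON could be saved to a file and deployed with CloudFormation.

```python
import json


def s3_bucket_template(logical_id="ArtifactBucket"):
    """Minimal CloudFormation template (as a dict) declaring one versioned S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Minimal example: a single versioned S3 bucket",
        "Resources": {
            logical_id: {
                "Type": "AWS::S3::Bucket",
                "Properties": {"VersioningConfiguration": {"Status": "Enabled"}},
            }
        },
        # Ref on an AWS::S3::Bucket resource returns the bucket name.
        "Outputs": {"BucketName": {"Value": {"Ref": logical_id}}},
    }


print(json.dumps(s3_bucket_template(), indent=2))
```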

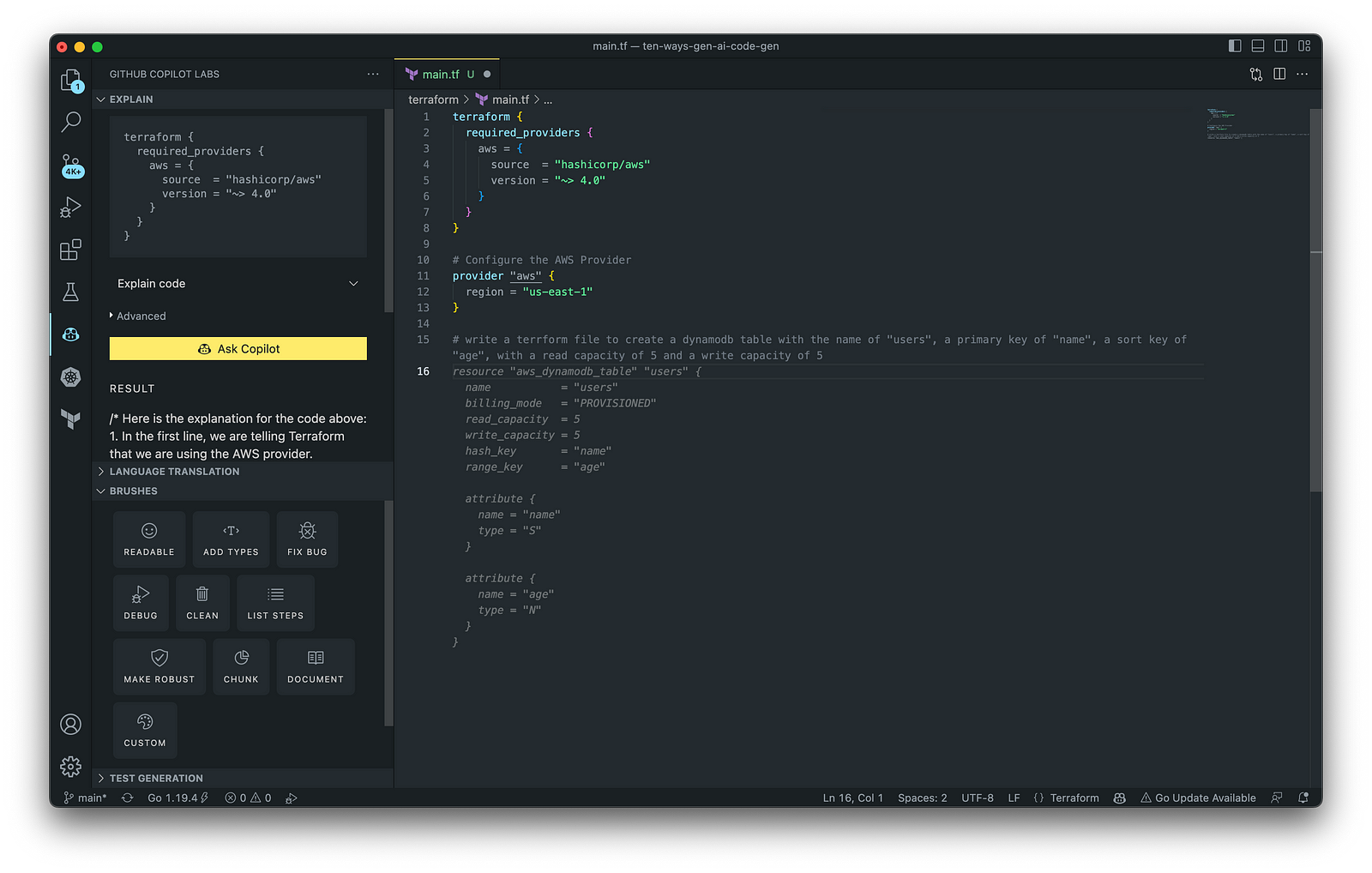

HashiCorp Terraform

In addition to AWS CloudFormation, HashiCorp Terraform is an extremely popular IaC tool. According to HashiCorp, Terraform lets you define resources and infrastructure in human-readable, declarative configuration files and manages your infrastructure’s lifecycle. Using Terraform has several advantages over manually managing your infrastructure.

Terraform plugins called providers let Terraform interact with cloud platforms and other services via their application programming interfaces (APIs). You can use the AWS Provider to interact with the many resources supported by AWS.

3. AWS Lambda

Lambda, according to AWS, is a serverless, event-driven compute service that lets you run code for virtually any application or backend service without provisioning or managing servers. You can trigger Lambda from over 200 AWS services and software as a service (SaaS) applications and only pay for what you use. AWS Lambda natively supports Java, Go, PowerShell, Node.js, C#, Python, and Ruby. AWS Lambda also provides a Runtime API allowing you to use additional programming languages to author your functions.

You can use GitHub Copilot to assist with writing AWS Lambda functions in any of the natively supported languages. You can further optimize the resulting Lambda code with GitHub’s Code Brushes.
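For orientation, a Python Lambda function is a handler that receives an `event` and a `context`. The minimal sketch below is not the function from this post; it assumes the function is triggered by Amazon S3 object-created notifications and simply logs and returns the bucket and key of each new object. The name `lambda_handler` matches the default used when creating a Python function in the Lambda console, but the handler name is configurable.

```python
import json
import logging
from urllib.parse import unquote_plus

logger = logging.getLogger()
logger.setLevel(logging.INFO)


def lambda_handler(event, context):
    """Log and return the bucket/key of every object in an S3 event notification."""
    objects = []
    for record in event.get("Records", []):
        s3 = record.get("s3", {})
        bucket = s3.get("bucket", {}).get("name")
        # Object keys arrive URL-encoded in S3 events (e.g., spaces become "+").
        key = unquote_plus(s3.get("object", {}).get("key", ""))
        logger.info("New object: s3://%s/%s", bucket, key)
        objects.append({"bucket": bucket, "key": key})
    return {"statusCode": 200, "body": json.dumps({"objects": objects})}
```

Because the handler is an ordinary function, it can be exercised locally by passing a sample event dictionary and `None` for the context.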

The final Python-based AWS Lambda, below, was formatted using the Black Formatter and Flake8 extensions and optimized using the ‘Readable,’ ‘Debug,’ ‘Make Robust,’ and ‘Fix Bug’ GitHub Code Brushes.

You can easily convert the Python-based AWS Lambda to Java using GitHub Copilot Labs’ ability to translate code between languages. Install the GitHub Copilot Labs extension for VS Code to try out language translation.

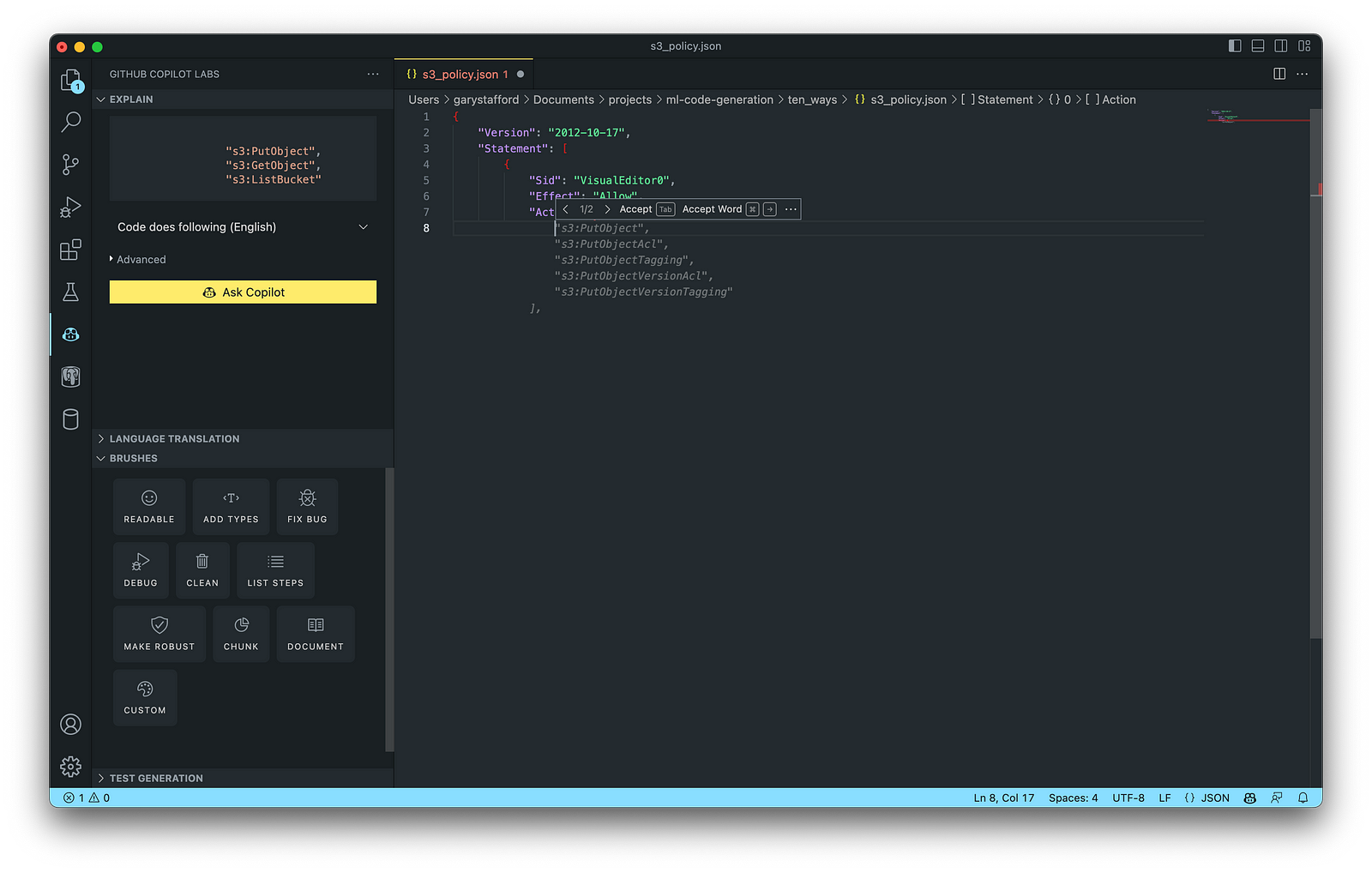

4. IAM Policies

AWS Identity and Access Management (AWS IAM) is a web service that helps you securely control access to AWS resources. According to AWS, you manage access in AWS by creating policies and attaching them to IAM identities (users, groups of users, or roles) or AWS resources. A policy is an object in AWS that defines its permissions when associated with an identity or resource. IAM policies are stored on AWS as JSON documents. You can use GitHub Copilot to assist in writing IAM Policies.
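Because IAM policies are JSON documents, they are easy to generate and review as code. The minimal sketch below builds a read-only policy for a single S3 bucket; the bucket name is a placeholder, and this is an illustrative example rather than the policy generated for this post. Note that `s3:ListBucket` applies to the bucket ARN, while `s3:GetObject` applies to the objects (`/*`) inside it.

```python
import json


def s3_read_only_policy(bucket_name):
    """Identity-based policy allowing list and read access to one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket_name}",
            },
            {
                "Sid": "ReadObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            },
        ],
    }


print(json.dumps(s3_read_only_policy("example-bucket"), indent=2))
```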

The final AWS IAM Policy, below, was formatted using VS Code’s built-in JSON support.

5. Structured Query Language (SQL)

SQL has many use cases on AWS, including Amazon Relational Database Service (RDS) for MySQL, PostgreSQL, MariaDB, Oracle, and SQL Server databases. SQL is also used with Amazon Aurora, Amazon Redshift, and Amazon Athena, as well as with Presto, Trino (formerly PrestoSQL), and Apache Hive on Amazon EMR.

You can use IDEs like VS Code with SQL dialect-specific language support and formatter extensions. You can further optimize the resulting SQL statements with GitHub’s Code Brushes.

The final PostgreSQL script, below, was formatted using the Sql Formatter extension and optimized using the ‘Readable’ and ‘Fix Bug’ GitHub Code Brushes.

6. Big Data

Big Data, according to AWS, can be described in terms of data management challenges that, due to the increasing volume, velocity, and variety of data, cannot be solved with traditional databases. AWS offers managed versions of Apache Spark, Apache Flink, Apache Zeppelin, and Jupyter Notebooks on Amazon EMR, AWS Glue, and Amazon Kinesis Data Analytics (KDA).

Apache Spark

According to their website, Apache Spark is a multi-language engine for executing data engineering, data science, and machine learning on single-node machines or clusters. Spark jobs can be written in various languages, including Python (PySpark), SQL, Scala, Java, and R. Apache Spark is available on a growing number of AWS services, including Amazon EMR and AWS Glue.

The final Python-based Apache Spark job, below, was formatted using the Black Formatter extension and optimized using the ‘Readable,’ ‘Document,’ ‘Make Robust,’ and ‘Fix Bug’ GitHub Code Brushes.

7. Configuration and Properties Files

According to TechTarget, a configuration file (aka config) defines the parameters, options, settings, and preferences applied to operating systems, infrastructure devices, and applications. There are many examples of configuration and properties files on AWS, including Amazon MSK Connect (Kafka Connect Source/Sink Connectors), Amazon OpenSearch (Filebeat, Logstash), and Amazon EMR (Apache Log4j, Hive, and Spark).

Kafka Connect

Kafka Connect is a tool for scalably and reliably streaming data between Apache Kafka and other systems. It makes it simple to quickly define connectors that move large collections of data into and out of Kafka. AWS offers a fully-managed version of Kafka Connect: Amazon MSK Connect. You can use GitHub Copilot to write Kafka Connect Source and Sink Connectors with Kafka Connect and Amazon MSK Connect.

The final Kafka Connect Source Connector, below, was formatted using VS Code’s built-in JSON support. It incorporates the Debezium connector for MySQL, Avro file format, schema registry, and message transformation. Debezium is a popular open source distributed platform for performing change data capture (CDC) with Kafka Connect.

8. Apache Airflow DAGs

Apache Airflow is an open-source platform for developing, scheduling, and monitoring batch-oriented workflows. Airflow’s extensible Python framework enables you to build workflows connecting with virtually any technology. DAG (Directed Acyclic Graph) is the core concept of Airflow, collecting Tasks together, organized with dependencies and relationships to say how they should run.
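The DAG idea itself, tasks plus the dependencies that determine their run order, can be illustrated without Airflow. The sketch below uses Python’s standard-library `graphlib` (Python 3.9+) to compute which hypothetical tasks could run together at each step. It is not Airflow’s API; in an Airflow DAG, you would declare the same dependencies between operators (for example, with the `>>` syntax), and the scheduler would handle execution.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"transform"},
    "load": {"transform"},
    "notify": {"validate", "load"},
}


def execution_batches(deps):
    """Group tasks into batches whose upstream dependencies are all complete."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not acyclic
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks that can run in parallel now
        batches.append(ready)
        sorter.done(*ready)
    return batches


for step, batch in enumerate(execution_batches(dependencies), start=1):
    print(step, batch)
```

The “acyclic” requirement matters: if `extract` also depended on `notify`, there would be no valid order, and `prepare()` would raise an error.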

Amazon Managed Workflows for Apache Airflow (Amazon MWAA) is a managed orchestration service for Apache Airflow. You can use GitHub Copilot to assist in writing DAGs for Apache Airflow, to be used with Amazon MWAA.

The final Python-based Apache Airflow DAG, below, was formatted using the Black Formatter extension. Unfortunately, in my testing, GitHub’s Code Brushes could not optimize Airflow DAGs.

9. Containerization

According to Check Point Software, containerization is a type of virtualization in which all the components of an application are bundled into a single container image and can be run in isolated user space on the same shared operating system. Containers are lightweight, portable, and highly conducive to automation. AWS describes containerization as a software deployment process that bundles an application’s code with all the files and libraries it needs to run on any infrastructure.

AWS has several container services, including Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Elastic Container Registry (Amazon ECR), and AWS Fargate. Several code-based resources can benefit from a Generative AI coding tool like GitHub Copilot, including Dockerfiles, Kubernetes resources, Helm Charts, Weaveworks Flux, and ArgoCD configuration.

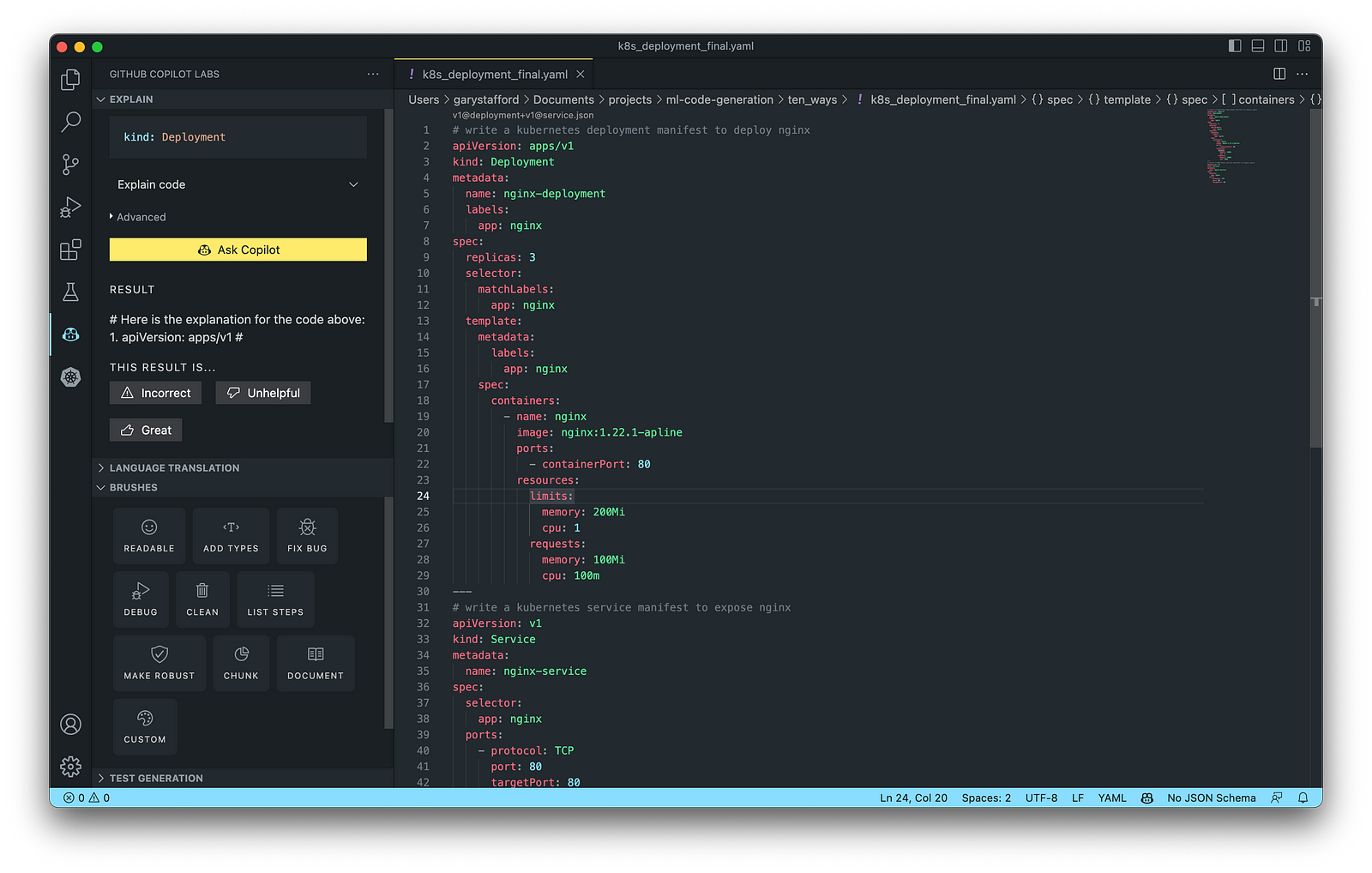

Kubernetes

Kubernetes objects are represented in the Kubernetes API and expressed in YAML format. Below is a Kubernetes Deployment resource file, which creates a ReplicaSet to bring up multiple replicas of nginx Pods.

The final Kubernetes resource file below contains Deployment and Service resources. In addition to GitHub Copilot, you can use Microsoft’s Kubernetes extension for VS Code to use IntelliSense and flag schema errors in the file.
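Since kubectl also accepts manifests written as JSON, a Deployment like the one described above can be expressed as a Python data structure and serialized. This sketch mirrors the familiar nginx example from the Kubernetes documentation (three replicas of `nginx:1.14.2` listening on port 80); the names and image tag are assumptions, and it is not the author’s YAML file. The Deployment’s `selector.matchLabels` must match the Pod template’s labels, which is why both reuse the same `labels` dictionary.

```python
import json


def nginx_deployment(replicas=3, image="nginx:1.14.2"):
    """Deployment manifest (as a dict) whose ReplicaSet runs `replicas` nginx Pods."""
    labels = {"app": "nginx"}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "nginx-deployment", "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": "nginx", "image": image, "ports": [{"containerPort": 80}]}
                    ]
                },
            },
        },
    }


print(json.dumps(nginx_deployment(), indent=2))
```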

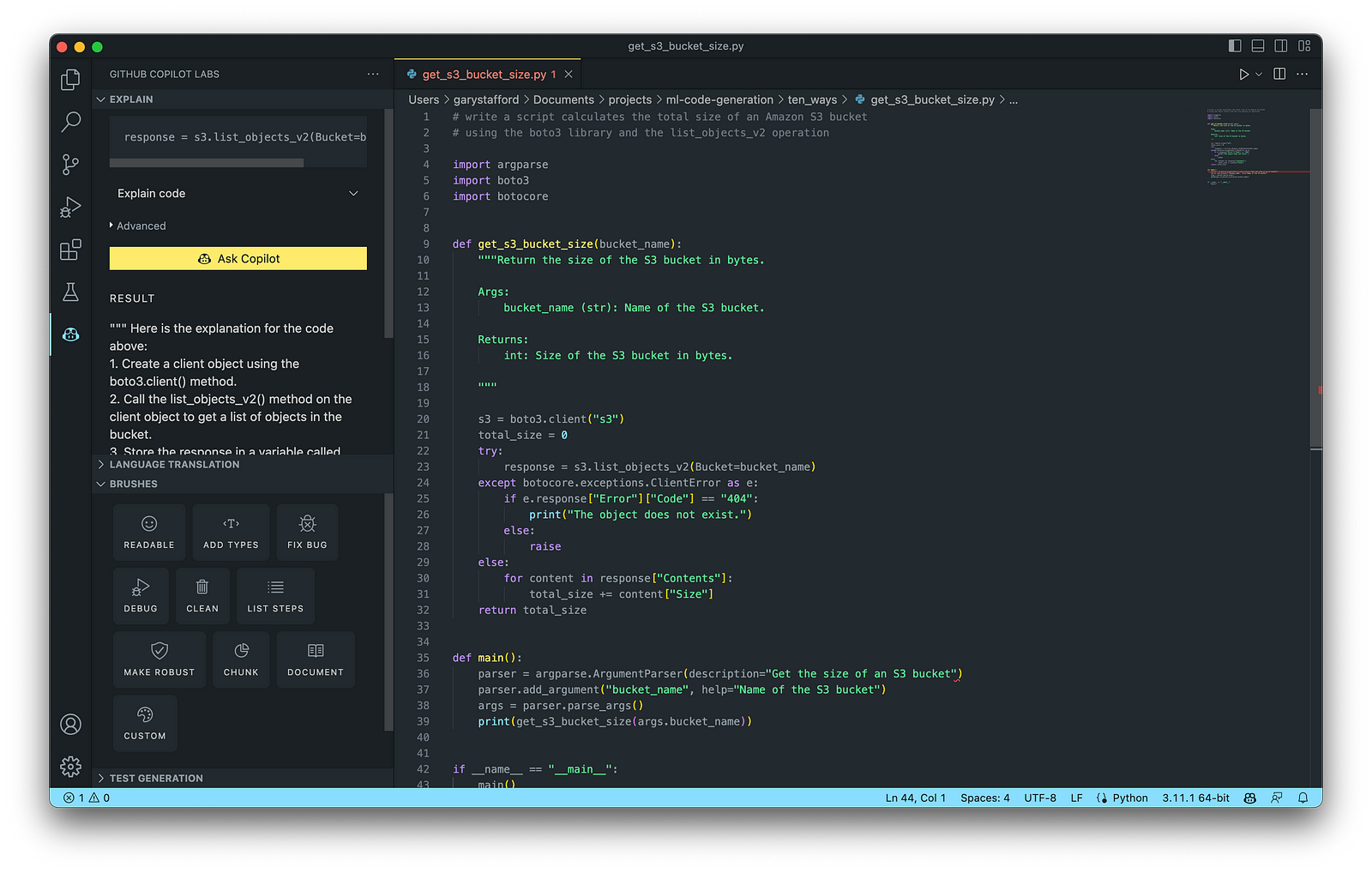

10. Utility Scripts

According to Bing AI — Search, utility scripts are small, simple snippets of code written as independent code files designed to perform a particular task. Utility scripts are commonly written in Bash, Shell, Python, Ruby, PowerShell, and PHP.

AWS utility scripts typically use the AWS Command Line Interface (AWS CLI) for Bash and Shell, and the AWS SDKs for other programming languages. SDKs take the complexity out of coding by providing language-specific APIs for AWS services. For example, Boto3, the AWS SDK for Python, easily integrates your Python application, library, or script with AWS services, including Amazon S3, Amazon EC2, Amazon DynamoDB, and more.

An example of a Python script to calculate the total size of an Amazon S3 bucket, below, was inspired by 100daysofdevops/N-days-of-automation, a fantastic set of open source AWS-oriented automation scripts.
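A minimal sketch of that idea with Boto3 follows; it is not the script from 100daysofdevops/N-days-of-automation. It pages through `list_objects_v2` results with a paginator and sums each object’s `Size`. The S3 client is passed in so the function can be tested without AWS access. It counts only current object versions, so noncurrent versions in versioned buckets and incomplete multipart uploads are not included.

```python
def bucket_size_bytes(s3_client, bucket):
    """Return (total_bytes, object_count) for the current objects in an S3 bucket."""
    paginator = s3_client.get_paginator("list_objects_v2")
    total_bytes = 0
    object_count = 0
    for page in paginator.paginate(Bucket=bucket):
        # Empty buckets (and empty pages) have no "Contents" key.
        for obj in page.get("Contents", []):
            total_bytes += obj["Size"]
            object_count += 1
    return total_bytes, object_count


def human_readable(num_bytes):
    """Format a byte count using binary units."""
    for unit in ("B", "KiB", "MiB", "GiB", "TiB"):
        if num_bytes < 1024 or unit == "TiB":
            return f"{num_bytes:.2f} {unit}"
        num_bytes /= 1024


# Usage (requires boto3 and AWS credentials; "my-bucket" is a placeholder):
#   import boto3
#   size, count = bucket_size_bytes(boto3.client("s3"), "my-bucket")
#   print(f"{count} objects, {human_readable(size)}")
```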

Conclusion

In this post, you learned ten ways to leverage Generative AI coding tools like GitHub Copilot for development on AWS. You saw how combining the latest generation of Generative AI coding tools, a mature and extensible IDE, and your coding experience will accelerate development, increase productivity, and reduce cost.

Accelerate Software Development with Six Popular Generative AI-Powered Coding Tools

Posted by Gary A. Stafford in AI/ML, Cloud, Software Development on March 25, 2023

Explore six popular generative AI-powered tools, including ChatGPT, Copilot, CodeWhisperer, Tabnine, Bing, and ChatSonic

Introduction

Modern software systems continue to grow inherently more complex over time. We have evolved from bulky monoliths to loosely coupled, event-driven, fault-tolerant, stateless, serverless, cloud-native, real-time, microservices-based, API-first, continuously deployed distributed systems, festooned with CQRS, 2PC, DDD, EDA, DOMA, Sagas, BFFs, GraphQL, gRPC, micro-frontends, contract tests, and Hexagonal architectures. Generative AI-powered coding tools do not necessarily make a developer’s job easier; rather, they help developers deal with increasing system complexity.

This post examines six popular generative AI-powered coding tools, including chat-based OpenAI ChatGPT, Microsoft’s all-new Bing Chat, and ChatSonic, as well as IDE-based Tabnine, GitHub Copilot, and Amazon CodeWhisperer (Preview). In this post, each tool will assist with developing an identical program to complete a series of common tasks on AWS. We will then compare and contrast each tool’s ease of use and the resulting code accuracy and quality.

“Generative AI coding tools are a new class of software development tools that leverage machine learning algorithms to assist developers in writing code. These tools use AI models trained on vast amounts of code to offer suggestions for completing code snippets, writing functions, and even entire blocks of code.” (quote generated by ChatGPT)

The generative AI space is evolving at a breakneck pace. Tools continue to rapidly improve their AI models, add new features, and adjust pricing. In just the short time it took to research and write this article:

- OpenAI announced GPT-4 on March 14, 2023

- Microsoft announced new Bing running on GPT-4 on March 14, 2023

- Google’s Bard AI launched in Early Access on March 21, 2023

- GPT-4 was added to Azure’s OpenAI Service on March 21, 2023

- Writesonic ChatSonic revised its pricing model on March 21, 2023

- GitHub announced GitHub Copilot X on March 22, 2023

- OpenAI announced ChatGPT plugins on March 23, 2023

Generative AI

According to McKinsey & Company in their recent article, What is generative AI?, “Generative artificial intelligence (AI) describes algorithms (such as ChatGPT) that can be used to create new content, including audio, code, images, text, simulations, and videos. Recent new breakthroughs in the field have the potential to drastically change the way we approach content creation.”

Generative AI for Code Generation

According to Papers with Code, “Code Generation is an important field to predict explicit code or program structure from multimodal data sources such as incomplete code, programs in another programming language, natural language descriptions or execution examples. Code Generation tools can assist the development of automatic programming tools to improve programming productivity.” Similarly, according to MarkTechPost, “Generative AI technologies have led to a surge of interest and progress in code generation applications. These technologies use machine learning algorithms and natural language processing to assist developers in automating the time-consuming and laborious portions of coding.”

Common Features

Standard features of leading generative AI-powered coding tools include the following:

- Whole-line, full-function, and block code completion

- Natural language to code completion

- Code suggestions based on the model’s pre-trained dataset

- Context-aware recommendations based on your existing code

- Context-aware recommendations based on your code comments

- Native integration with popular IDEs

- Multi-language coding support

- Respond to follow-up instructions (resulting in refinement of code)

Benefits

The benefits of generative AI-powered code generation include the following:

- Accelerate application development

- Improve development productivity

- Decrease development costs

- Produce higher-quality, more consistent code

- Enable better code documentation and test coverage

- Learn new programming languages and coding techniques

- Contribute to the democratization of programming

Methods of Code Generation

As you explore different generative AI tools for writing code, you will likely develop techniques for generating effective code based on the tool.

Long-form Narrative Approach

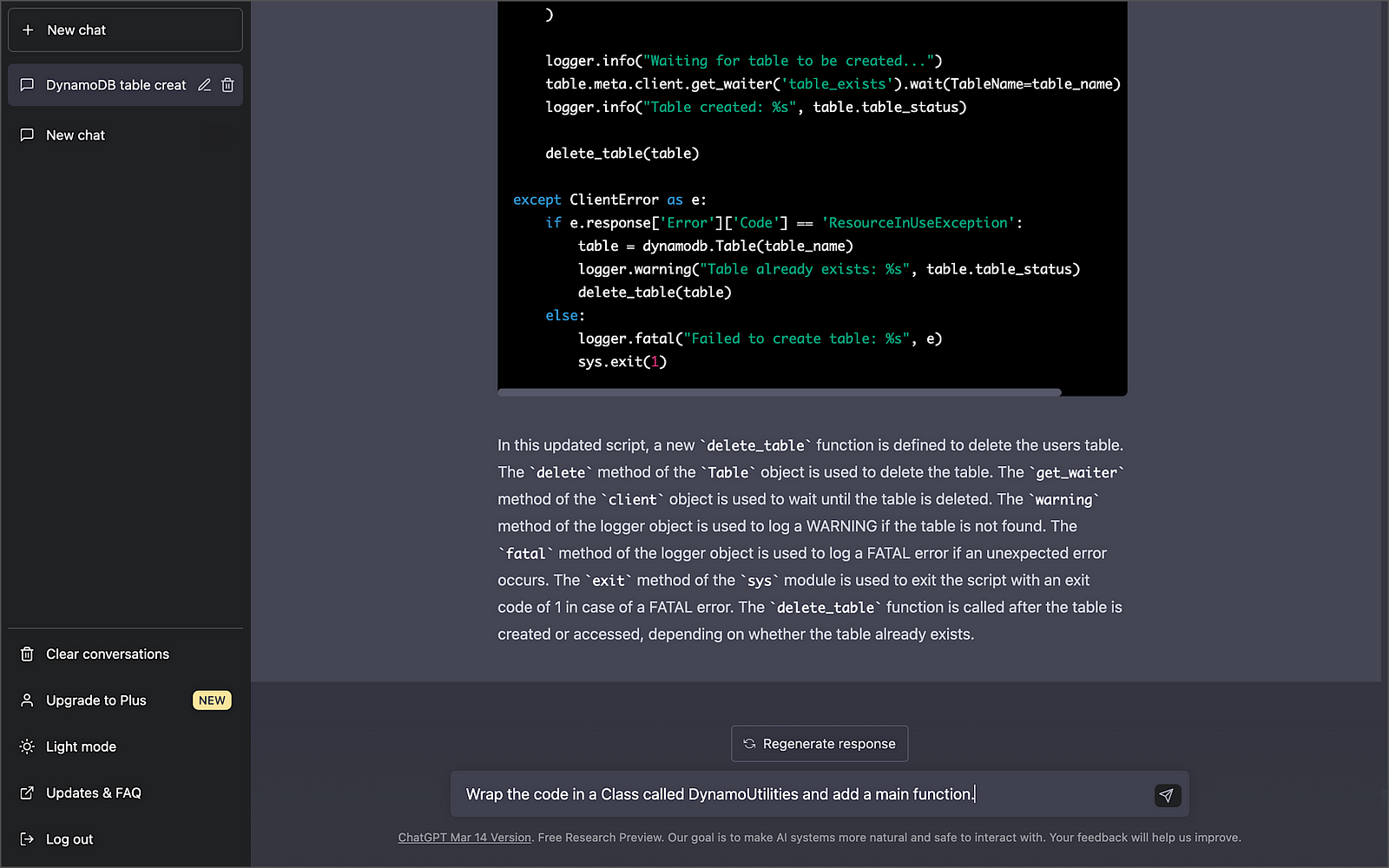

With chat-based tools like OpenAI ChatGPT, Microsoft Bing Chat, and ChatSonic, developers might use a longer narrative-type instruction (aka one-shot approach) rather than a series of more concise instructions to generate the code. For example:

- “Write a Python script to create a new DynamoDB table with a partition key of username, a sort key of last_name, and provisioned throughput of 5 RCUs and 5 WCUs. Pass the table name into the function as a variable. Wait until the table exists before continuing. Include try/except blocks and logging. If the table fails to be created, log a FATAL error and exit, and if the table already exists, log a WARNING. Add a main function and wrap everything in a Class called DynamoUtilities.”

- “Add three new functions, including a function to insert a new item into a table, a function to retrieve an item from a table, and a function to delete a table.”
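For reference, here is a minimal sketch of the kind of code those two prompts describe, written against the low-level Boto3 DynamoDB client. It is not ChatGPT's actual response. The method names, the injected `client` parameter (which lets the class be exercised without AWS credentials), and the string-only extra attributes are assumptions for illustration, and the requested main function is left out for brevity.

```python
import logging
import sys

logger = logging.getLogger(__name__)


class DynamoUtilities:
    """Hypothetical sketch of the class requested by the long-form prompts."""

    def __init__(self, client):
        # Expected to be a Boto3 DynamoDB client, e.g. boto3.client("dynamodb").
        self.client = client

    def create_table(self, table_name):
        """Create a table keyed on username/last_name and wait until it exists."""
        try:
            self.client.create_table(
                TableName=table_name,
                KeySchema=[
                    {"AttributeName": "username", "KeyType": "HASH"},    # partition key
                    {"AttributeName": "last_name", "KeyType": "RANGE"},  # sort key
                ],
                AttributeDefinitions=[
                    {"AttributeName": "username", "AttributeType": "S"},
                    {"AttributeName": "last_name", "AttributeType": "S"},
                ],
                ProvisionedThroughput={"ReadCapacityUnits": 5, "WriteCapacityUnits": 5},
            )
        except self.client.exceptions.ResourceInUseException:
            # DynamoDB reports an already existing table as ResourceInUseException.
            logger.warning("Table %s already exists", table_name)
            return
        except Exception:
            # logging.FATAL is an alias for logging.CRITICAL.
            logger.critical("Failed to create table %s", table_name, exc_info=True)
            sys.exit(1)
        # Block until the table exists before continuing.
        self.client.get_waiter("table_exists").wait(TableName=table_name)
        logger.info("Created table %s", table_name)

    def insert_item(self, table_name, username, last_name, **attributes):
        """Insert an item; extra attributes are stored as strings for simplicity."""
        item = {"username": {"S": username}, "last_name": {"S": last_name}}
        item.update({name: {"S": str(value)} for name, value in attributes.items()})
        self.client.put_item(TableName=table_name, Item=item)

    def get_item(self, table_name, username, last_name):
        """Return the item as a low-level attribute map, or None if it does not exist."""
        response = self.client.get_item(
            TableName=table_name,
            Key={"username": {"S": username}, "last_name": {"S": last_name}},
        )
        # The response has no "Item" key when nothing matches.
        return response.get("Item")

    def delete_table(self, table_name):
        """Delete the table and wait until it no longer exists."""
        self.client.delete_table(TableName=table_name)
        self.client.get_waiter("table_not_exists").wait(TableName=table_name)
        logger.info("Deleted table %s", table_name)
```

In a real script, you would pass `boto3.client("dynamodb")` to the constructor and configure logging (for example, `logging.basicConfig(level=logging.INFO)`) before calling the methods.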

Below is an example of OpenAI ChatGPT’s response using this approach. Of course, this method doesn’t limit you from following up with additional instructions to further enhance and refine the code.

Progressive Approach

Although the previous approach makes for an impressive demonstration, it differs from how most developers work. Instead, a developer might use a progressive approach (sometimes loosely called chain of thought, although chain-of-thought prompting more precisely refers to asking a model to reason step by step) to generate and refine the code with chat-based tools. In my tests with chat-based tools, a progressive approach of issuing multiple concise instructions to guide the model toward the desired behavior produced higher-quality code with fewer errors. For example:

- “Write a Python script to create a DynamoDB table with a partition key of username, a sort key of last_name, and provisioned throughput of 5 RCUs and 5 WCUs.”

- “Pass the table name into the create table function as a variable.”

- “Wait until the table exists before continuing.”

- “Add logging with the default level of INFO.”

- “Include try/except blocks, log a FATAL error and exit if the table fails to be created and a WARNING if the table already exists.”

- “Add a function to delete the table and wait until the table is deleted before continuing.”

- “Add a main function.”

- “Add a function to insert a new item into a table and a function to get an item from a table.”

- “Wrap the code in a Class called DynamoUtilities.”

- “Convert the Python code to Java.”
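When the same progressive approach is driven through an API rather than a chat UI, each follow-up instruction must be sent together with the conversation so far, because OpenAI's Chat Completions endpoint does not keep conversation state between calls. The sketch below shows only that bookkeeping. The `Conversation` class and its `send` callable are hypothetical stand-ins for a real API call; only the `role`/`content` message shape follows OpenAI's format.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Accumulates chat history so every follow-up carries the earlier turns."""

    def __init__(self, send: Callable[[List[Message]], str]):
        # Hypothetical hook: receives the full history and returns the model's reply.
        self.send = send
        self.messages: List[Message] = []

    def ask(self, instruction: str) -> str:
        self.messages.append({"role": "user", "content": instruction})
        # Pass a copy so the sender cannot mutate the stored history.
        reply = self.send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

Feeding the ten instructions above to `ask` one at a time means the tenth request carries nineteen messages: all ten instructions plus the first nine replies.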

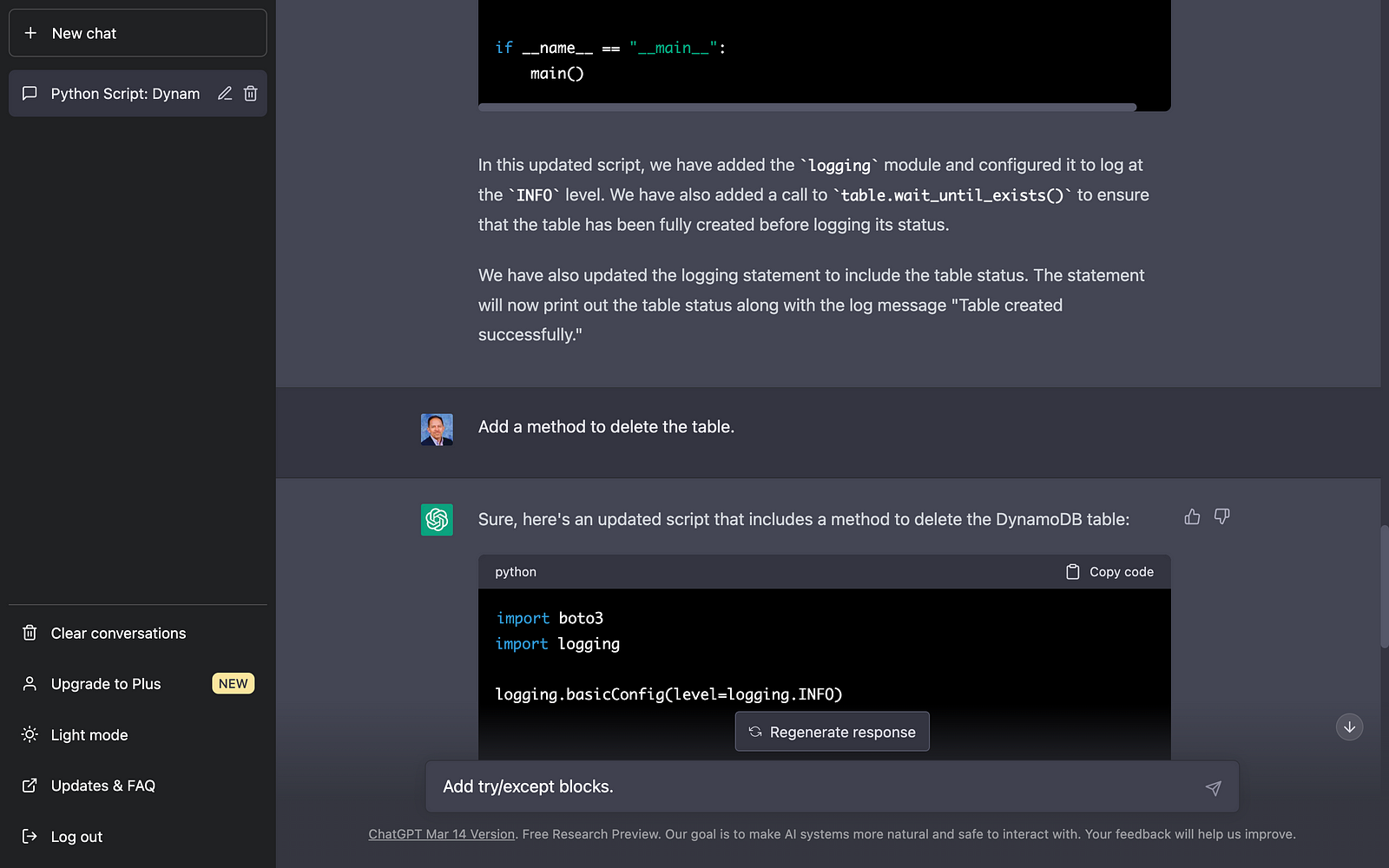

Below is an example of OpenAI ChatGPT’s response using a progressive code generation approach. The final code results from an initial prompt and a series of follow-up instructions to enhance and refine the code.

Be aware that most chat-based tools have a session limit. For example, Bing has a limit of 15 chats per session, which will limit the number of follow-up instructions you can use to modify the code. Additionally, the slightest variation in how an instruction is phrased may significantly impact the code generated. There is a whole science and blossoming industry around prompt optimization!

IDE-based Code Generation

Tabnine, GitHub Copilot, and Amazon CodeWhisperer use generative AI technology to predict and suggest new lines or blocks of code based on their pre-trained models and on the context and syntax of the existing code and code comments in your IDE. These tools differ from tools like OpenAI ChatGPT, Microsoft Bing Chat, and ChatSonic, which generate code through a chat-based interaction with the user. IDE-based tools like Tabnine, GitHub Copilot, and Amazon CodeWhisperer are like having an AI-powered pair programming partner that enhances your development productivity. In my opinion, however, these tools also require a reasonable level of development experience.

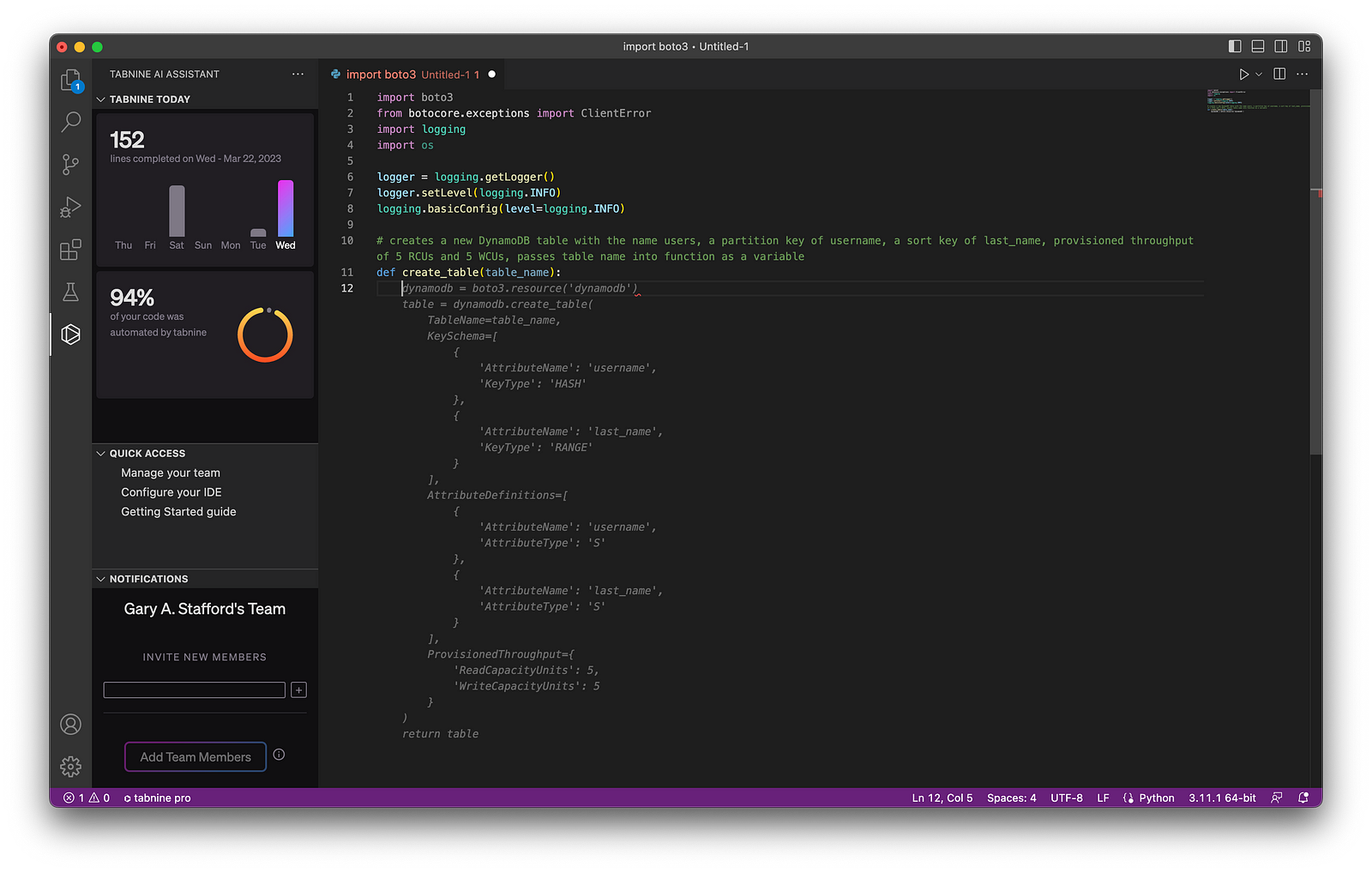

Below, we see an example of Tabnine Pro’s ability to generate whole-line code completion (line 11) and full-function code completion (lines 12–41) based on the existing code context and syntax (lines 1–11).

Although writing code comments may be perceived as easier than using generative code completion, many industry pundits discourage what they call “comment-driven development.” Instead, they believe that relying on generative AI-based code completion produces better results.
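To make the distinction concrete: in comment-driven development, you write a descriptive comment and accept the body the tool proposes beneath it, whereas with code completion, you start typing the code itself and let the tool finish it. The snippet below is a hand-written illustration of the comment-first style, not output captured from any tool; `build_user_key` is a hypothetical helper that builds the low-level Boto3 key for the username/last_name table used throughout this post.

```python
from typing import Dict


# Build the low-level DynamoDB key for a user, using username as the
# partition key and last_name as the sort key; both are string (S) attributes.
def build_user_key(username: str, last_name: str) -> Dict[str, Dict[str, str]]:
    return {
        "username": {"S": username},
        "last_name": {"S": last_name},
    }
```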

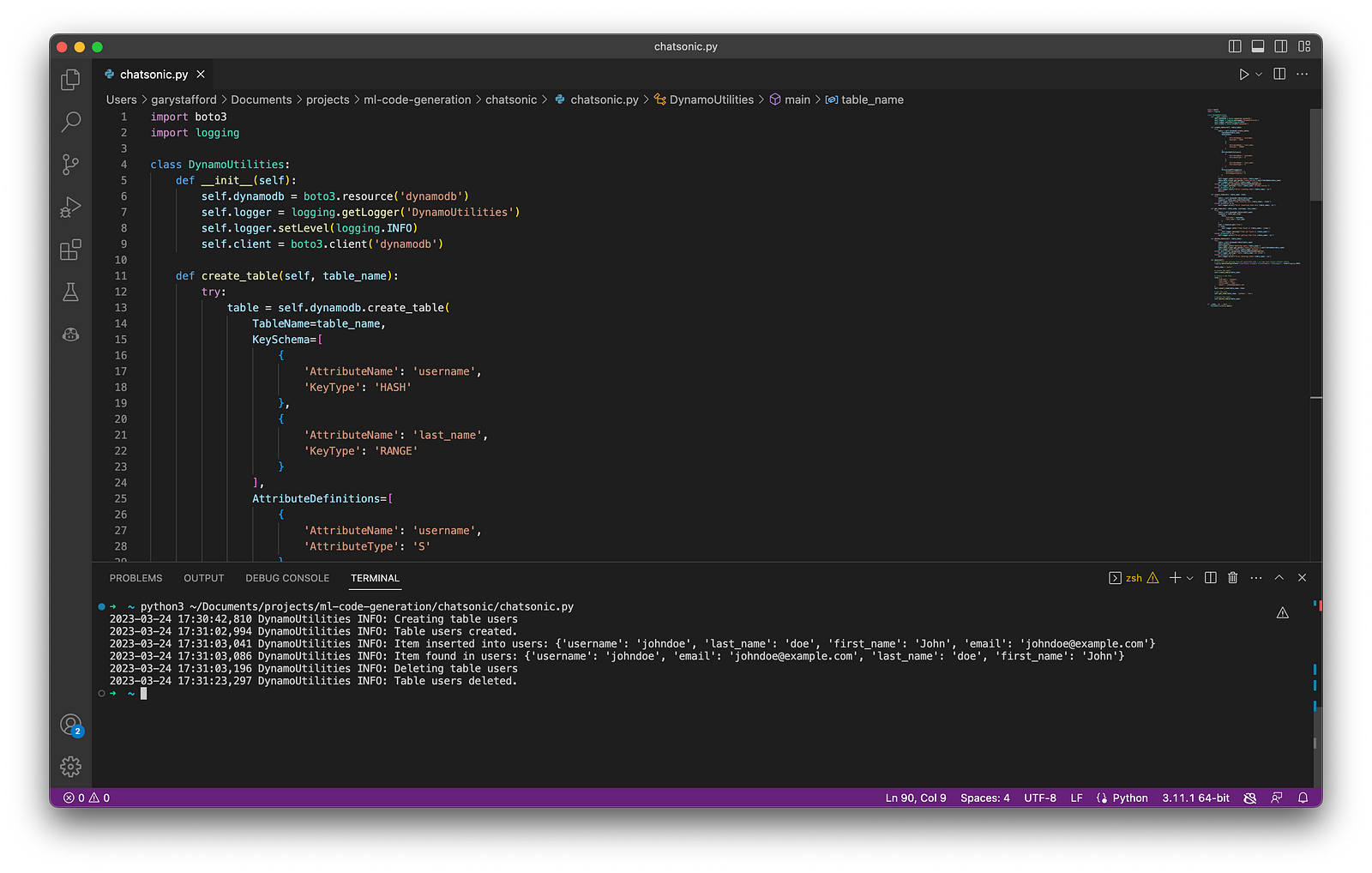

Testing Generative AI Tools

With the assistance of each tool, I wrote a Python script to perform the following tasks: 1) create an Amazon DynamoDB table, 2) insert an item into the table, 3) retrieve an item from the table, and finally, 4) delete the table. Although I tested the results in multiple IDEs, I have included only the results from Visual Studio Code (VS Code); tool interactions and code results were consistent across IDEs.

As a benchmark for “what good looks like” when evaluating code accuracy and quality, I used the examples provided with AWS’s Boto3 SDK and DynamoDB API documentation, along with PEP 8 – Style Guide for Python Code.

An example of the final code, written with the assistance of GitHub Copilot, including unit tests, is available on GitHub.
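The published unit tests are not reproduced here. As a minimal sketch of one common way to unit-test Boto3 code without touching AWS, the test below replaces the DynamoDB client with `unittest.mock.MagicMock` and asserts on the calls made. The `delete_table` helper and the test names are hypothetical.

```python
import unittest
from unittest import mock


def delete_table(client, table_name):
    """Delete a table and block until DynamoDB reports it is gone."""
    client.delete_table(TableName=table_name)
    client.get_waiter("table_not_exists").wait(TableName=table_name)


class DeleteTableTest(unittest.TestCase):
    def test_deletes_and_waits(self):
        # MagicMock stands in for boto3.client("dynamodb") and records every call.
        client = mock.MagicMock()
        delete_table(client, "users")
        client.delete_table.assert_called_once_with(TableName="users")
        client.get_waiter.assert_called_once_with("table_not_exists")
        client.get_waiter.return_value.wait.assert_called_once_with(TableName="users")
```

Saved as, for example, `test_dynamo.py` (a hypothetical file name), the test runs with `python -m unittest test_dynamo`.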

OpenAI ChatGPT

According to Wikipedia, ChatGPT (Generative Pre-trained Transformer) “is an artificial intelligence chatbot developed by OpenAI and launched in November 2022. It is built on top of OpenAI GPT-3 and GPT-4 [released March 14, 2023] families of large language models [LLMs] and has been fine-tuned (an approach to transfer learning) using both supervised and reinforcement learning techniques.”

According to the VC-backed California-based startup OpenAI, “ChatGPT interacts in a conversational way. The dialogue format makes it possible for ChatGPT to answer follow-up questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests.” Further, “ChatGPT was optimized for dialogue using Reinforcement Learning with Human Feedback (RLHF), which uses human demonstrations and preference comparisons to guide the model toward desired behavior.”

Getting Started with ChatGPT

You can get started with ChatGPT for free using the Free Plan. However, if you want to use the latest generative AI models, get faster results, and ensure availability, you should consider ChatGPT Plus for $20/month. GPT-4 is now available through the ChatGPT Plus Plan.

ChatGPT’s Free Plan is a great way to test the generative AI waters. However, due to potentially slow chat responses and unavailability during peak times, you will want to upgrade to ChatGPT Plus if your team plans to rely on the tool.

Using ChatGPT

OpenAI ChatGPT is available through a web-based chat interface or programmatically using OpenAI APIs. For API users, OpenAI provides online API references, code examples, and an interactive coding playground. According to OpenAI, “The OpenAI API can be applied to virtually any task that involves understanding or generating natural language, code, or images.”
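As a rough illustration of programmatic access, the sketch below builds a request for OpenAI's Chat Completions endpoint using only the Python standard library. The model name `gpt-3.5-turbo` and the `build_chat_request` helper are assumptions for illustration; check OpenAI's API reference for current models and parameters, and keep the API key out of source code.

```python
import json
import urllib.request

CHAT_COMPLETIONS_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str,
                       model: str = "gpt-3.5-turbo") -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request for a single prompt."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        CHAT_COMPLETIONS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

Sending it is then a matter of `urllib.request.urlopen(request)`; the reply text sits at `choices[0].message.content` in the JSON response.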

Below is an example of output using ChatGPT’s UI. Using the UI, ChatGPT’s results are a mix of code blocks and explanatory text. ChatGPT has a straightforward, clean, and functional web-based user interface. There is a button to copy the resulting code to your clipboard, and previous chat sessions are saved and accessible.
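Because ChatGPT's responses mix prose and fenced code blocks, pulling out just the code is easy to script when working with API output or saved transcripts. A minimal sketch, assuming the code is delimited by Markdown triple-backtick fences with an optional language tag; `extract_code_blocks` is a hypothetical helper, and nested or indented fences are not handled.

```python
import re
from typing import List

# Built from a single backtick repeated three times so this listing contains no fence line.
FENCE = "`" * 3
# An opening fence with an optional language tag (e.g. python, c++), the body
# (non-greedy, spanning lines), then the closing fence.
CODE_BLOCK = re.compile(FENCE + r"[\w+-]*[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code_blocks(response_text: str) -> List[str]:
    """Return the body of each fenced code block, in order, without trailing newlines."""
    return [body.rstrip("\n") for body in CODE_BLOCK.findall(response_text)]
```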

ChatGPT Results

In my tests, building the code progressively with concise instructions produced better-quality code than the longer-form narrative approach. The code created using the progressive approach was accurate, error-free, and well-written.

A significant advantage of ChatGPT is its ability to remember what happened earlier in the conversation. According to OpenAI documentation, the model can reference up to approximately 3k words (or 4k tokens) from the current conversation; any information beyond that is not stored.
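When calling the API directly, the client has to keep the conversation within that limit itself. A minimal sketch that drops the oldest messages first, assuming the rough ratio implied above (about 3k words per 4k tokens, or 0.75 words per token). Real token counts come from the model's tokenizer, and `trim_history` is a hypothetical helper, not how ChatGPT manages its own context.

```python
from typing import Dict, List

Message = Dict[str, str]

# Rough ratio implied by "about 3k words or 4k tokens"; real tokenizers differ.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    return round(len(text.split()) / WORDS_PER_TOKEN)


def trim_history(messages: List[Message], max_tokens: int = 4000) -> List[Message]:
    """Keep the most recent messages whose estimated total fits in max_tokens."""
    kept: List[Message] = []
    total = 0
    # Walk backward from the newest message so older turns are dropped first.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if total + cost > max_tokens:
            break
        kept.append(message)
        total += cost
    kept.reverse()  # restore chronological order
    return kept
```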

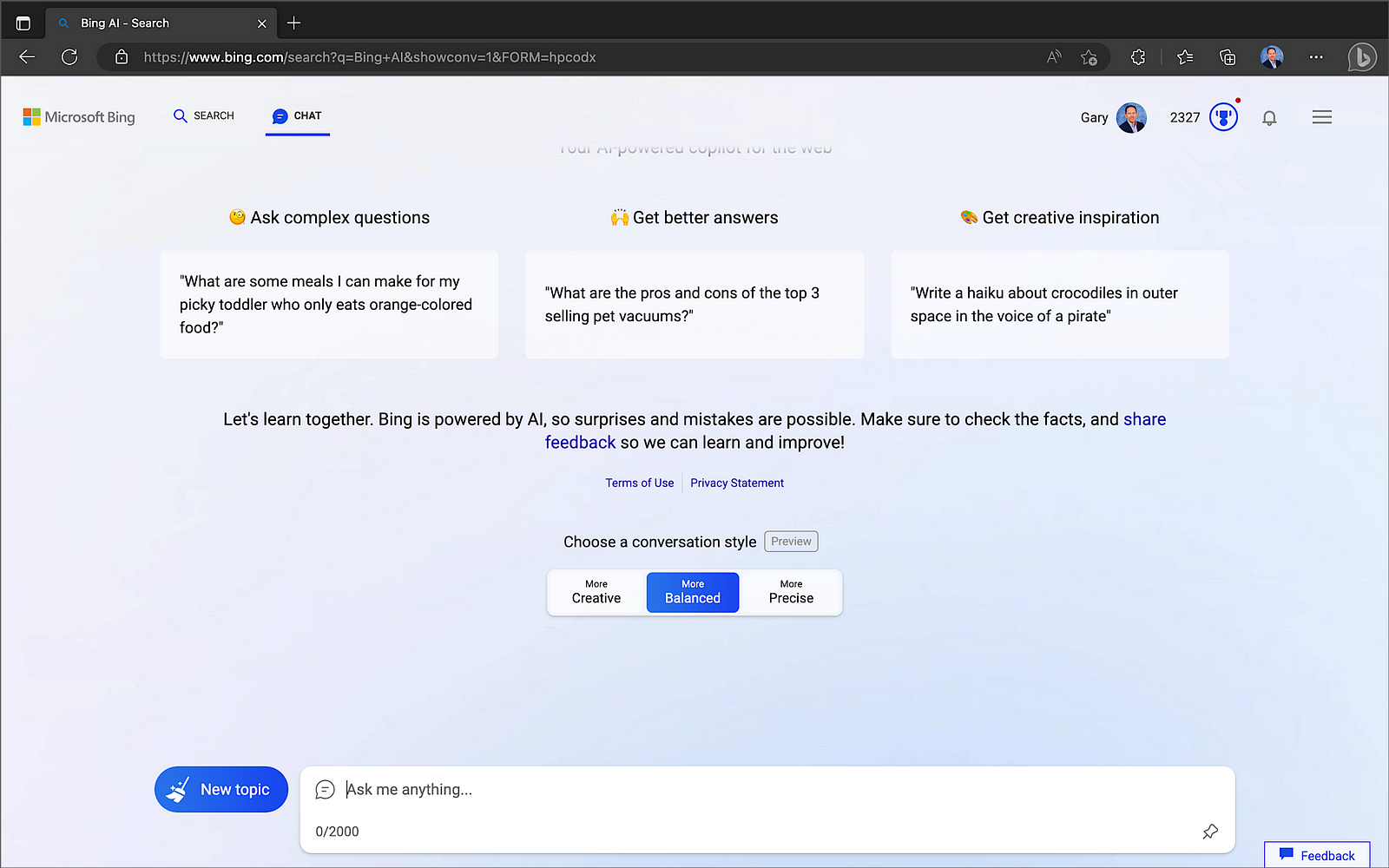

Microsoft Bing Chat

According to their blog post on February 7, Microsoft launched “an all new, AI-powered Bing search engine and Edge browser, available in preview now at Bing.com, to deliver better search, more complete answers, a new chat experience and the ability to generate content. We think of these tools as an AI copilot for the web.” The latest version of Bing “is running on a new, next-generation OpenAI large language model that is more powerful than ChatGPT and customized specifically for search. It takes key learnings and advancements from ChatGPT and GPT-3.5 — and it is even faster, more accurate and more capable.” On March 14, Microsoft confirmed the new Bing runs on OpenAI’s latest GPT-4.

Getting Started with Bing Chat

Bing’s Chat mode is only available when you access Bing using Microsoft Edge.

Microsoft Edge is available for most major platforms, including macOS, iOS, and Android.

Using Bing Chat

Bing Chat now supports up to 15 chats per session and 150 per day. Users can supply up to 15 prompts (questions or instructions) to create and improve the code results. When responding, Bing considers the context of previous prompts in the same chat session.
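Those limits determine how far a progressive conversation can go before context is lost. A minimal sketch that splits a list of prompts into sessions, using the per-session and per-day limits quoted above as defaults (they reflect the time of writing and may change); `plan_sessions` is a hypothetical helper.

```python
from typing import List, Sequence


def plan_sessions(prompts: Sequence[str], per_session: int = 15,
                  per_day: int = 150) -> List[List[str]]:
    """Group prompts into chat sessions without exceeding the quoted limits.

    Context carries over only within a session, so prompts that land in a
    later session will not see the earlier turns.
    """
    if len(prompts) > per_day:
        raise ValueError(f"{len(prompts)} prompts exceed the daily limit of {per_day}")
    return [list(prompts[i:i + per_session]) for i in range(0, len(prompts), per_session)]
```

The ten-prompt progressive example earlier fits in a single session; a 40-prompt plan would span three sessions, and prompts in the second and third sessions would lose the earlier context.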

Bing Chat Results

Like OpenAI ChatGPT, Bing Chat delivered accurate, error-free, and well-written code. Although ChatGPT and Bing Chat are not IDE-based tools, the resulting code is easily copied into your project or saved to disk. Additionally, these tools provide an excellent opportunity to learn new languages and coding techniques without requiring an IDE.

ChatSonic

ChatSonic is a service of Writesonic. According to Y Combinator, startup Writesonic, founded by Samanyou Garg, is backed by leading venture capital firms, including Y Combinator, HOF Capital, Rebel Fund, Soma Capital, Broom Ventures, Amino Capital, and some of the best angels from different industries.

ChatSonic claims to improve upon the limitations of ChatGPT. According to Writesonic, ChatSonic is “a powerful chatbot, powered by Google Search, allowing it to provide up-to-date and accurate content and hence superior to ChatGPT, which can not generate content on topics after September 2021. Additionally, it can create digital images and respond to voice commands and many more additional features. So, ChatSonic has addressed all the limitations of ChatGPT.”

Getting Started with ChatSonic

You can get started with ChatSonic for free, with up to 10,000 words. However, like OpenAI ChatGPT, if you want to take advantage of GPT-4, you will want to upgrade to a paid plan, starting at $13/month with an annual commitment.

Using ChatSonic is nearly identical to using OpenAI ChatGPT.

During my testing for this article, I ran into several issues with the free trial of ChatSonic’s Premium Plan. For example, in Chrome for Mac, the code block constantly flashed and refreshed on the screen, a minor but highly annoying issue. Also, when copying or downloading code results, you get both the code and the informational text, which you must separate. Worse, the final code block did not always include all the code output; the code was intermixed with informational text. Lastly, when I used follow-up instructions to modify the results, the regenerated code often omitted earlier modifications. In my tests, after several follow-up instructions, the resulting code was missing up to one-third of the previous statements. Due to these issues, the resulting code block was malformed and would not run. The more I chatted with ChatSonic, the worse the code issues became, and the issues were repeatable.
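To catch regressions like these, where a regenerated version silently drops earlier statements, you can diff each new version against the previous one before accepting it. A minimal sketch using the standard library's `difflib`; `dropped_lines` is a hypothetical helper, and edited lines are reported too, because `ndiff` shows an edit as a removal plus an addition.

```python
import difflib
from typing import List


def dropped_lines(previous: str, regenerated: str) -> List[str]:
    """List non-blank lines from the previous version that the regenerated one lacks.

    ndiff prefixes removed lines with "- "; "? " hint lines are ignored.
    """
    diff = difflib.ndiff(previous.splitlines(), regenerated.splitlines())
    return [line[2:] for line in diff if line.startswith("- ") and line[2:].strip()]
```

Comparing the length of that list with the number of non-blank lines in the previous version gives a rough measure of how much a regeneration lost.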

ChatSonic Results

Unlike ChatGPT and Bing Chat, the free trial of ChatSonic’s Premium Plan did not deliver accurate, error-free, or well-written code during my tests. Although later tests with fewer follow-up instructions produced better, though still incomplete, results, my first impressions were unfavorable. The results might improve with ChatSonic’s Superior or Ultimate Plans, which use the newest GPT-4 model. However, given the Premium results, I was not keen to pay for a higher-tier plan to find out.

Tabnine

According to the VC-backed, Israel-based startup Tabnine, its stated aim is “to create and deliver a top-to-bottom AI-assisted development workflow that empowers all code creators, in all languages, from concept through to completion.” Tabnine Pro serves whole-line, full-function, and natural language to code completions. Regarding AI, in Tabnine’s opinion, “a multi-model approach far outperforms a monolithic approach. That’s why we’ve developed language-specific code native AI models, which are pre-trained on code and provide faster and more accurate code completions based on your tech stack.” To learn more about their models, read Tabnine’s blog post, Tabnine Enterprise vs. ChatGPT Plus.

Getting Started with Tabnine

You can get started with Tabnine for free with the Starter Plan. However, the Starter Plan’s features are limited compared to the Pro Plan, which starts at $12/month for a single user.

You can try the Pro Plan’s features free for 14 days.

Tabnine supports a wide range of programming languages.

To get started with Tabnine, choose your favorite IDE and install the required extension or plugin.

Tabnine provides automated or manual IDE installation.

The Tabnine extension for VS Code already has a staggering 5.1 million downloads! A valuable tip I learned while testing the IDE-based tools was to disable all non-essential extensions to establish a baseline of each tool’s capabilities. Initially, with more than eighty VS Code extensions enabled, I found it hard to isolate each tool’s features and identify potential conflicts with other extensions I had loaded, especially other generative AI tools.

Using Tabnine

As discussed earlier, Tabnine, like GitHub Copilot and Amazon CodeWhisperer, uses generative AI technology to predict and suggest subsequent lines of code based on context and syntax within your IDE. It is like having IntelliSense on steroids.

Like Copilot and CodeWhisperer, it takes some practice to get efficient with Tabnine. After starting with the initial import and logging statements, I used code comments to generate the functions, then returned to add exception-catching and logging.

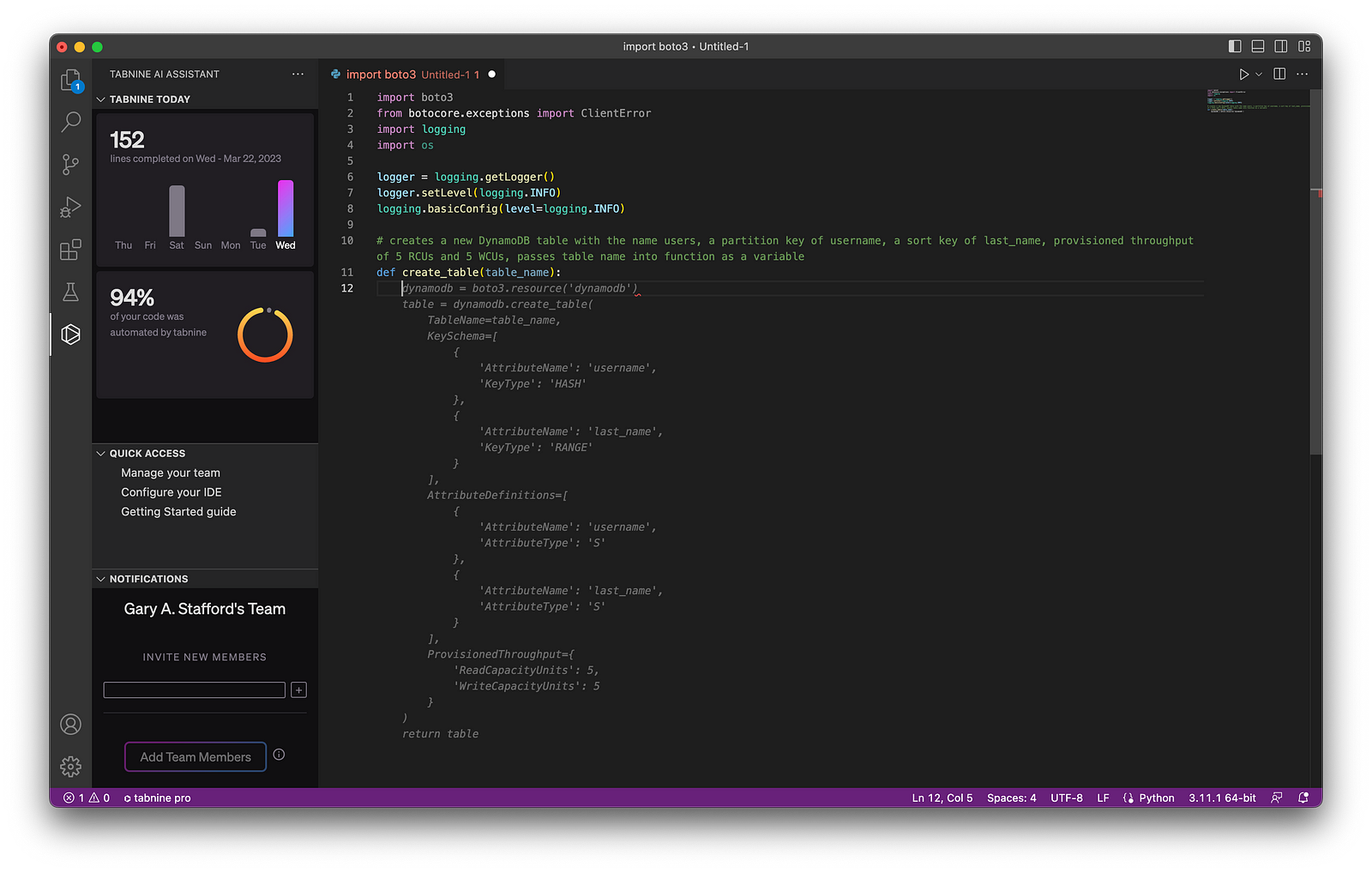

Tabnine, like Copilot and CodeWhisperer, includes a collapsible navigation bar. However, unlike those tools, I found that Tabnine’s navigation bar provided little value. It seemed geared more toward marketing statistics and encouraging sign-ups than toward providing useful development features like those in Copilot and CodeWhisperer.

Below, we see an example of Tabnine Pro’s ability to generate whole-line code completion (line 11) and full-function code completion (lines 12–41) based on the existing code context and syntax (lines 1–11).

Tabnine Results

Given my development knowledge and experience with similar tools, I found the final code results with Tabnine Pro were accurate, error-free, and well-formed (Pythonic). However, I would have liked to see additional features to differentiate Tabnine from its competitors.

GitHub Copilot

According to GitHub, “Copilot is an AI pair programmer that offers autocomplete-style suggestions as you code. You can receive suggestions from GitHub Copilot either by starting to write the code you want to use or by writing a natural language comment describing what you want the code to do. In addition, GitHub Copilot analyzes the context in the file you are editing and related files, and offers suggestions from within your text editor.” Copilot is powered by OpenAI Codex, a new AI system created by OpenAI.

Given the vast popularity and volume of code on GitHub, it is no wonder Copilot is trained on all languages that appear in GitHub’s public repositories. GitHub points out that the quality of suggestions you receive may depend on the volume and diversity of training data for that language.

GitHub Copilot X

GitHub is extending Copilot’s capabilities with GitHub Copilot X, announced on March 21, 2023. According to GitHub, “With chat and terminal interfaces, support for pull requests, and early adoption of OpenAI’s GPT-4, GitHub Copilot X is our vision for the future of AI-powered software development. Integrated into every part of your workflow.”

Getting Started with Copilot

Get started with GitHub Copilot for as little as $10/month. This Monthly Plan is a great way to test Copilot’s capabilities. In addition, GitHub is currently offering a 60-day free trial of Copilot.

Regarding IDE support, Copilot is currently available as an extension in Visual Studio Code, Visual Studio, Neovim, and the JetBrains suite of IDEs. The GitHub Copilot extension already has 4.2 million downloads, and the GitHub Copilot Nightly extension, used for this post, has almost 250,000 downloads.

GitHub Copilot can also be used with Codespaces, GitHub’s fully configured, VS Code-based development environments in the cloud, similar to AWS Cloud9.

Using Copilot

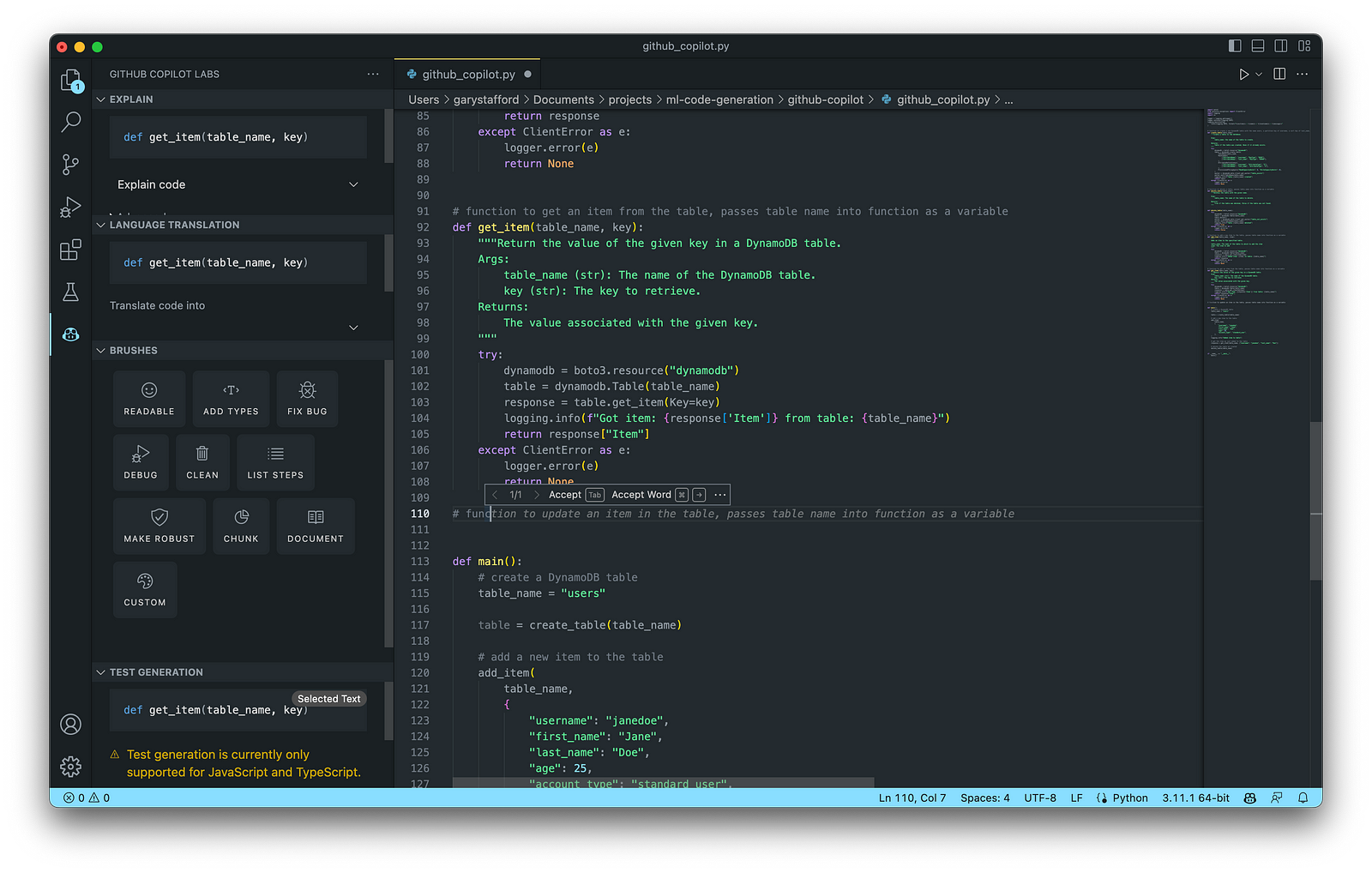

As discussed earlier, GitHub Copilot, like Tabnine and Amazon CodeWhisperer, uses generative AI technology to predict and suggest new lines of code based on existing context and syntax. In addition, Copilot includes an inline pop-up toolbar, which makes it easier to scroll through and accept code choices.

Copilot also has an extensive collapsible navigation bar, which can explain code, translate code between languages, provide access to Copilot’s Brushes, and even generate tests. My only disappointment with Copilot is that test generation currently supports only JavaScript and TypeScript, a notable gap given Python’s enormous popularity.

Below, we see an example of Copilot’s ability to generate whole-line code completion (line 14) and full-function code completion (lines 15–34) based on the existing code context and syntax (lines 1–14).

Copilot Results

Given my development knowledge and experience with similar tools, the final code results with Copilot were accurate, error-free, and well-formed. I found that Copilot’s additional features, especially those on its collapsible navigation bar, extended its functionality well beyond most of the other generative AI tools I tested. Lastly, I felt Copilot’s ability to learn from the existing code context and syntax was superior to that of the other tools.

Amazon CodeWhisperer (Preview)

According to AWS, Amazon CodeWhisperer, announced in June 2022, “is a general purpose, machine learning-powered code generator that provides you with code recommendations, in real time. As you write code, CodeWhisperer automatically generates suggestions based on your existing code and comments. Your personalized recommendations can vary in size and scope, ranging from a single line comment to fully formed functions.” CodeWhisperer code generation is powered by ML models trained on various data sources, including Amazon and open-source code.

CodeWhisperer currently supports Java, JavaScript, Python, C#, and TypeScript. Although these are some of the most popular languages, CodeWhisperer’s language support is limited compared to Tabnine and Copilot.

Getting Started with CodeWhisperer

It is easy to get started with CodeWhisperer. Unfortunately, final pricing is unavailable since Amazon CodeWhisperer is still in Preview as of late March 2023.

CodeWhisperer is available through the AWS Toolkit for JetBrains, the AWS Toolkit for Visual Studio Code, the AWS Lambda console, and AWS Cloud9. With the Lambda console and AWS Cloud9, setup simply involves activating CodeWhisperer within the editor.

Getting started with CodeWhisperer requires installing the AWS Toolkit, if applicable, and then choosing and setting up an authentication method: AWS Builder ID, IAM Identity Center (formerly AWS SSO), or IAM.

AWS Builder ID

According to the documentation, “the AWS Builder ID is a new personal profile for everyone who builds on AWS. Your AWS Builder ID provides access to tools and builder services on AWS, including Amazon CodeCatalyst and Amazon CodeWhisperer. You can keep your AWS Builder ID as you move between jobs, schools, or other organizations. AWS Builder IDs are free. You only pay for the AWS resources you consume in your AWS accounts.”

Using CodeWhisperer

Nearly identical to Copilot and Tabnine, Amazon CodeWhisperer provides real-time code recommendations in your IDE. Unique features of CodeWhisperer include first-class support for AWS APIs, built-in security scans, a code reference tracker, and responsible AI filtering, which removes code recommendations that might be considered biased or unfair.

Below, we see an example of CodeWhisperer’s ability to generate whole-line code completion (line 14) and full-function code completion (lines 15–27) based on the existing code context and syntax (lines 1–14).

CodeWhisperer Results

As with Tabnine and Copilot, the final code results with CodeWhisperer were accurate, error-free, and well-formed. In addition, I appreciated the added benefits of CodeWhisperer’s Security Scan and its Reference Tracker, which flags recommendations that resemble code from other projects.

Other Options

Many other generative AI coding tools are of comparable quality to the six featured in this post, and new tools are being released almost daily.

Google Bard

On March 21, 2023, Google announced limited access to Bard, an early experiment that lets you collaborate with generative AI. Google is beginning with the U.S. and the U.K. and will expand to more countries and languages over time. Bard is based on Google’s LaMDA (Language Model for Dialogue Applications), a transformer-based model.

Azure OpenAI Service with GPT-4

On March 21, 2023, Microsoft announced that GPT-4 was available in preview in Azure OpenAI Service. Unfortunately, Azure OpenAI Service requires registration and is currently only available to approved enterprise customers and partners. To get started, if you are qualified, you must trudge through the 25-question form.

Replit Ghostwriter

California-based, VC-backed startup Replit has put the power of its AI into what it calls the world’s most popular online IDE. Replit says its AI “automates away the repetitive parts of coding, so you can stay focused on making your creative vision a reality.” Replit has also announced Ghostwriter Chat, which lets you chat with a coding AI directly in your IDE and now includes a proactive debugger and awareness of your project’s code.

Conclusion

In this post, we examined six popular generative AI-powered coding tools, including chat-based OpenAI ChatGPT, Microsoft’s all-new Bing Chat, and ChatSonic, as well as IDE-based GitHub Copilot, Amazon CodeWhisperer (Preview), and Tabnine. Each tool assisted with developing an identical program to complete everyday database tasks on AWS. We then compared and contrasted the ease of use and the resulting code accuracy and quality of the tools. The generative AI space is moving at a breakneck pace. Tools continue to rapidly improve their AI models, add new features, and adjust pricing.

Recommended References

- Article: Top Artificial Intelligence (AI) Tools That Can Generate Code To Help Programmers (Marktechpost)

- Article: Top 29 ChatGPT alternatives that will blow your mind in 2023 (Free & Paid) (Writesonic)

- Research Paper: Deep Learning for Source Code Modeling and Generation: Models, Applications and Challenges (Triet H. M. Le, Hao Chen, M. Ali Babar)

- Article: NLP vs. NLU vs. NLG: the differences between three natural language processing concepts (IBM)

- Article: Top 17 Generative AI-based Programming Tools (For Developers) (Towards AI)


This blog represents my viewpoints and not those of my employer, Amazon Web Services (AWS). All product names, logos, and brands are the property of their respective owners.